Software Zebra | Matrox Aurora Imaging Library X

Description

Aurora® Imaging Library, formerly Matrox Imaging Library, is a comprehensive collection of software tools for developing machine vision and image analysis applications. Aurora Imaging Library includes tools for every step in the process, from application feasibility to prototyping, through to development and ultimately deployment. The software development kit (SDK) features interactive software and programming functions for image capture, processing, analysis, annotation, display, and archiving. These tools are designed to enhance productivity, thereby reducing the time and effort required to bring solutions to market. Image capture, processing, and analysis operations have the accuracy and robustness needed to tackle the most demanding applications. These operations are also carefully optimized for speed to address the severe time constraints encountered in many applications.

Aurora Imaging Library development

First released in 1993, Aurora Imaging Library has evolved to keep pace with and foresee emerging industry requirements. It was conceived with an easy-to-use, coherent API that has stood the test of time. Aurora Imaging Library pioneered the concept of hardware independence with the same API for different image acquisition and processing platforms. A team of dedicated, highly skilled computer scientists, mathematicians, software engineers, and physicists continues to maintain and enhance Aurora Imaging Library. Aurora Imaging Library is maintained and developed using industry-recognized best practices, including peer review, user involvement, and daily builds. Users are asked to evaluate and report on new tools and enhancements, which strengthens and validates releases. Ongoing Aurora Imaging Library development is integrated and tested as a whole on a daily basis.

Aurora Imaging Library SQA

In addition to the thorough manual testing performed prior to each release, Aurora Imaging Library continuously undergoes automated testing during the course of its development. The automated validation suite, consisting of both systematic and random tests, verifies the accuracy, precision, robustness, and speed of image processing and analysis operations. Results, where applicable, are compared against those of previous releases to ensure that performance remains consistent. The automated validation suite runs continuously on hundreds of systems simultaneously, rapidly providing wide-ranging test coverage. The systematic tests are performed on a large database of images representing a broad sample of real-world applications.

Latest key additions and enhancements:

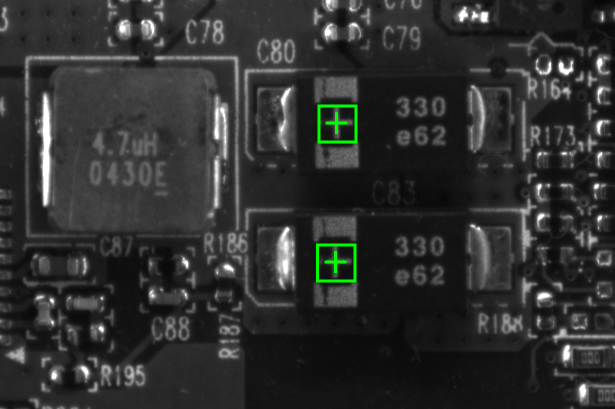

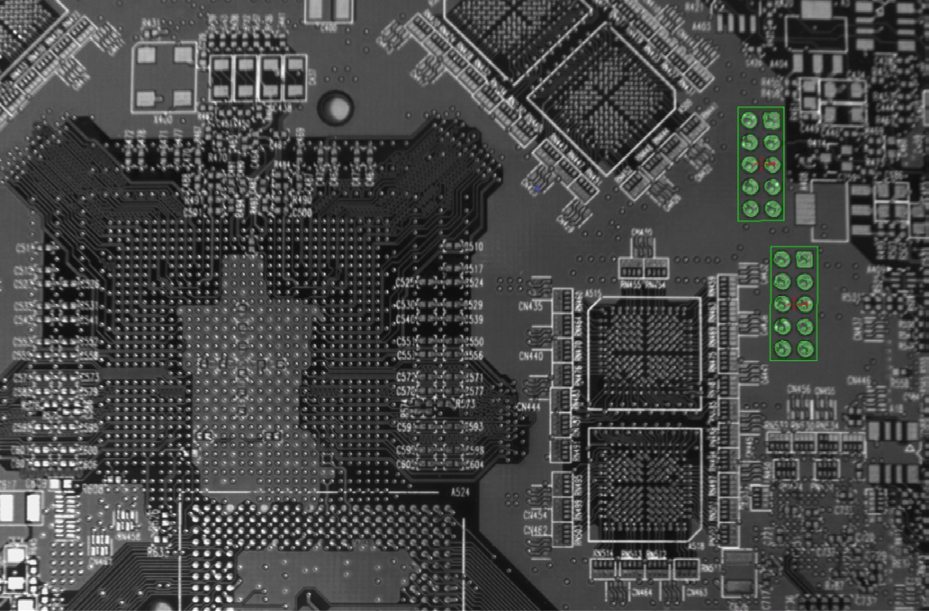

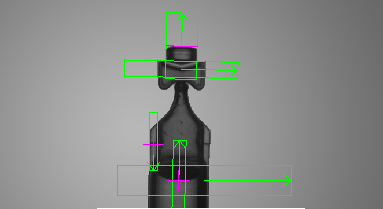

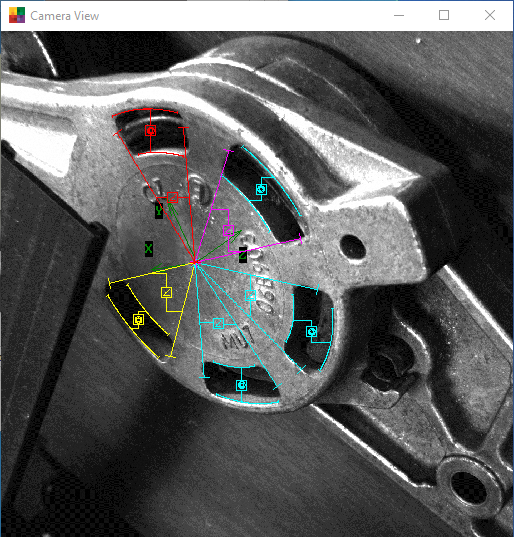

Technical specification

Field-Proven Vision Tools:

Image analysis and processing tools

Central to Aurora Imaging Library are tools for calibrating; classifying, enhancing, and transforming images; locating objects; extracting and measuring features; reading character strings; and decoding and verifying identification marks. These tools are carefully developed to provide outstanding performance and reliability, and can be used within a single computer system or distributed across several computer systems.

Pattern recognition tools

Aurora Imaging Library includes three tools for performing pattern recognition: Pattern Matching, Geometric Model Finder (GMF), and Advanced Geometric Matcher (AGM). These tools are primarily used to locate complex objects for guiding a gantry, stage, or robot, or for directing subsequent measurement operations.

The Pattern Matching tool is based on normalized grayscale correlation (NGC), a classic technique that finds a pattern by looking for a similar spatial distribution of intensity. A hierarchical search strategy lets this tool very quickly and reliably locate a pattern, including multiple occurrences, which are translated and slightly rotated, with sub-pixel accuracy. The tool performs well when scene lighting changes uniformly, which is useful for dealing with attenuating illumination. A pattern can be trained manually or determined automatically for alignment. Search parameters can be manually adjusted and patterns can be manually edited to tailor performance.
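To illustrate why normalized grayscale correlation tolerates uniform lighting changes, here is a minimal pure-Python sketch of the classic NGC score with a brute-force search. It is not the Pattern Matching tool's implementation: the function names `ngc_score` and `find_pattern` are hypothetical, the search is exhaustive rather than hierarchical, and there is no rotation handling or sub-pixel refinement.

```python
import math

def ngc_score(patch, pattern):
    """Normalized grayscale correlation of two equally sized 2D lists (range -1..1)."""
    a = [v for row in patch for v in row]
    b = [v for row in pattern for v in row]
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    da = [v - mean_a for v in a]   # removing the mean cancels a brightness offset
    db = [v - mean_b for v in b]
    denom = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    if denom == 0:
        return 0.0                 # flat patch or pattern: treat as "no match"
    # Dividing by the norms cancels a uniform gain (contrast) change.
    return sum(x * y for x, y in zip(da, db)) / denom

def find_pattern(image, pattern):
    """Exhaustive search; returns (row, col, score) of the best-scoring position."""
    ph, pw = len(pattern), len(pattern[0])
    best = (0, 0, -1.0)
    for r in range(len(image) - ph + 1):
        for c in range(len(image[0]) - pw + 1):
            patch = [row[c:c + pw] for row in image[r:r + ph]]
            score = ngc_score(patch, pattern)
            if score > best[2]:
                best = (r, c, score)
    return best

pattern = [[10, 50],
           [50, 10]]
# The same pattern appears at row 1, col 2, but brighter: value * 2 + 20.
image = [[0, 0,   0,   0],
         [0, 0,  40, 120],
         [0, 0, 120,  40],
         [0, 0,   0,   0]]
row, col, score = find_pattern(image, pattern)
```

Because the mean is subtracted and the result is divided by both norms, the brightened copy still scores a perfect match, which is the property the text refers to when it mentions uniform lighting changes.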

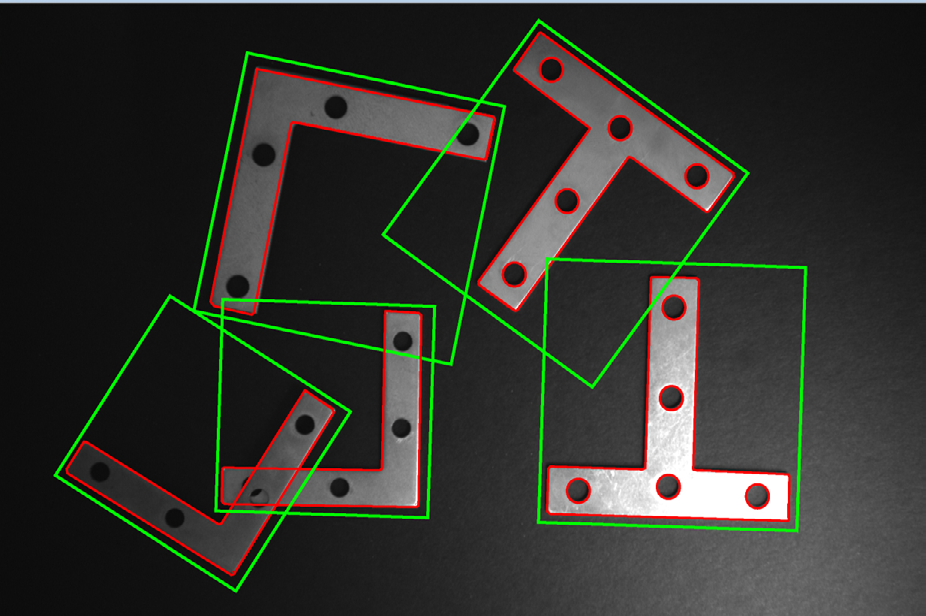

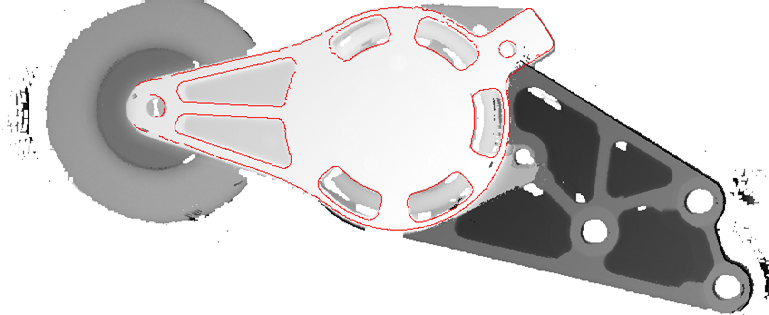

The GMF tool uses geometric features (e.g., contours) to find an object. The tool quickly and reliably finds multiple models, including multiple occurrences, that are translated, rotated, and/or scaled with sub-pixel accuracy. GMF locates an object that is partially missing and continues to perform when a scene is subject to uneven changes in illumination, thus relaxing lighting requirements. A model can be trained manually from an image, obtained from a CAD file, or determined automatically for alignment. A model can also be obtained from the Edge Finder tool, where the geometric features are defined by color boundaries and crests or ridges in addition to contours. Physical setup requirements are eased when GMF is used in conjunction with the Calibration tool as models become independent of camera position. GMF parameters can be manually adjusted and models can be manually edited to tailor performance.

The new-generation AGM tool uses edges and related metrics to dependably locate one or more occurrences of a model that are subject to translation, slight rotation, minor scale differences, and partial occlusion. A model is defined from a single image or trained with user assistance from multiple images. The latter delivers greater robustness for a model that varies naturally and is searched in a cluttered scene.

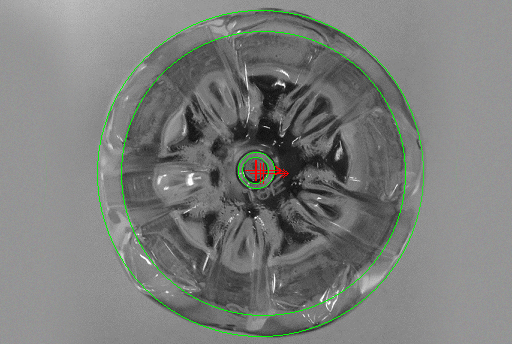

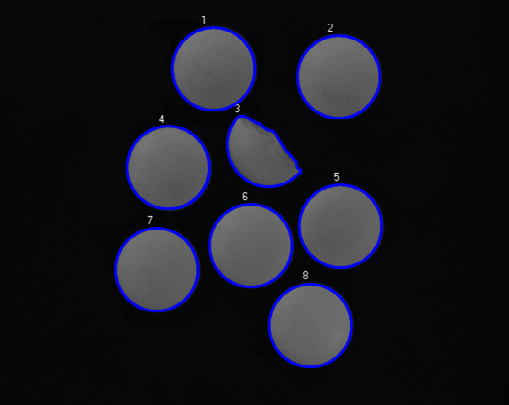

Shape finding tools

The GMF tool in Aurora Imaging Library includes dedicated modes for finding circles, ellipses, rectangles, and line segments. These modes use the same advanced edge-based technique to locate one or more occurrences of any size, including ones within another for circles, ellipses, and rectangles. Circle finding is defined by the anticipated radius, the possible scale range, and the number of expected occurrences.

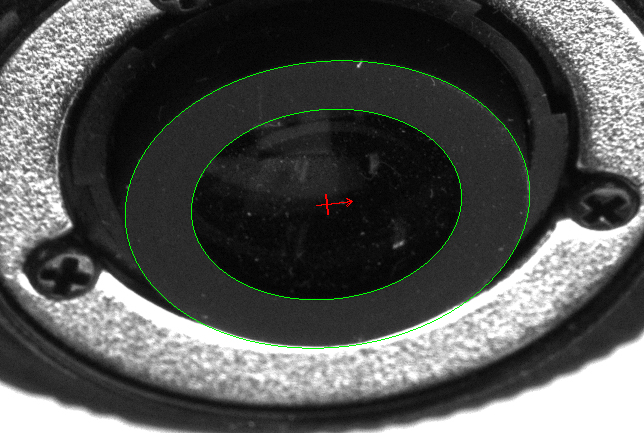

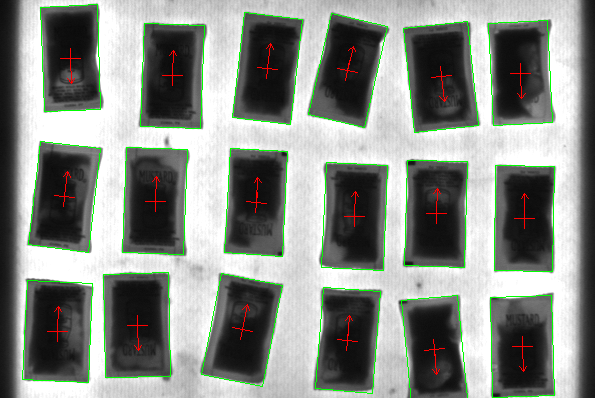

Ellipse and rectangle finding are defined by the anticipated width and height, the possible scale and aspect ratio ranges, and the number of expected occurrences.

Line segment finding is defined by the anticipated length and the number of expected occurrences.

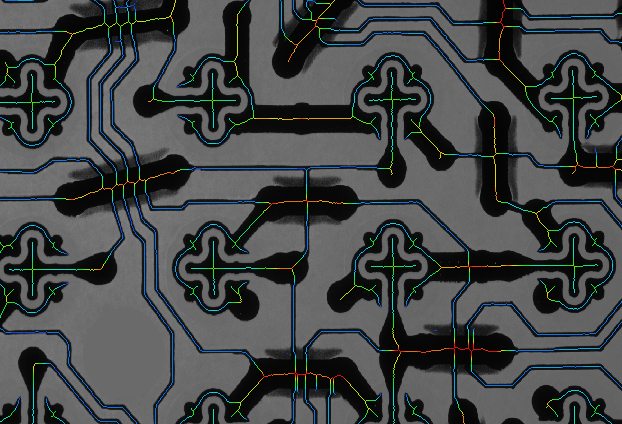

Continuous and broken edges lying within an adjustable variation tolerance produce the requested shape. The shape-finding tool returns the total number of found occurrences; for each occurrence, the tool provides the center position and score relative to the reference. It also gives the radius and scale for circles; the angle, aspect ratio, width, and scale for ellipses and rectangles; and the start and end positions as well as the length for line segments. These specialized modes are generally faster and more robust at finding the specific shapes than generic pattern recognition.

Feature extraction and analysis

Aurora Imaging Library provides a choice of tools for image analysis: Blob Analysis and Edge Finder. These tools are used to identify and measure basic features for determining object presence and location, and to further examine objects.

The Blob Analysis tool works on segmented binary images, where objects are previously separated from the background and one another. Using run-length encoding, the tool quickly identifies blobs and can measure over 50 binary and grayscale characteristics. Measurements can be used to sort and select blobs. The tool also reconstructs and merges blobs, which is useful when working with blobs that straddle successive images.
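As a rough illustration of the run-length encoding mentioned above, the sketch below compresses one row of a binary image into foreground runs. The function name `run_length_encode` is hypothetical and this is only the generic technique, not the Blob Analysis tool's internal representation.

```python
def run_length_encode(row):
    """Return (start, length) for each run of foreground (non-zero) pixels in a row."""
    runs = []
    start = None                       # start column of the run being scanned, if any
    for x, value in enumerate(row):
        if value and start is None:
            start = x                  # a new run begins
        elif not value and start is not None:
            runs.append((start, x - start))
            start = None               # the run ended at the previous pixel
    if start is not None:              # run touching the right border
        runs.append((start, len(row) - start))
    return runs

row = [0, 1, 1, 0, 0, 1, 1, 1]
runs = run_length_encode(row)                   # [(1, 2), (5, 3)]
row_area = sum(length for _, length in runs)    # foreground pixels in this row
```

Runs on adjacent rows that overlap horizontally belong to the same blob, so features such as area can be accumulated per run instead of per pixel, which is one reason this encoding is fast.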

The Edge Finder tool is well suited for scenes with changing, uneven illumination. Using gradient-based and Hessian-based approaches, the tool quickly identifies contours, as well as crests or ridges, in monochrome or color images and can measure over 50 characteristics with sub-pixel accuracy. Measurements can be used to sort and select edges. The edge extraction method can be adjusted to tailor performance.

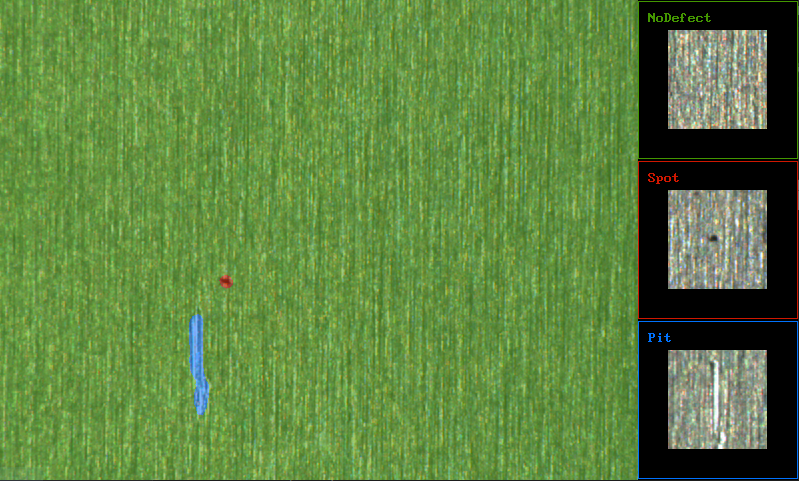

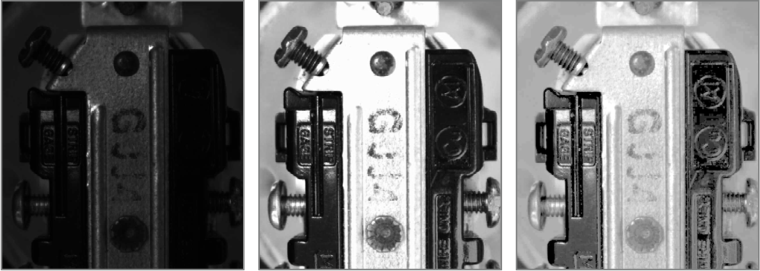

Classification tools

Aurora Imaging Library includes Classification tools for automatically categorizing image content or previously extracted features using machine learning. Image-oriented classification makes use of deep learning technology, specifically the convolutional neural network (CNN) and variants, in three distinct approaches.

The global approach assigns images or image regions to pre-established classes. It lends itself to identification tasks where the goal is to distinguish between similar-looking objects, including those with slight imperfections. The results for each image or image region consist of the most likely class and a score for each class.

The segmentation approach generates maps indicating the pre-established class and score for all image pixels. It is appropriate for detection tasks where the objective is to determine the incidence, location, and extent of flaws or features. These features can then be further analyzed and measured using traditional tools like Blob Analysis.

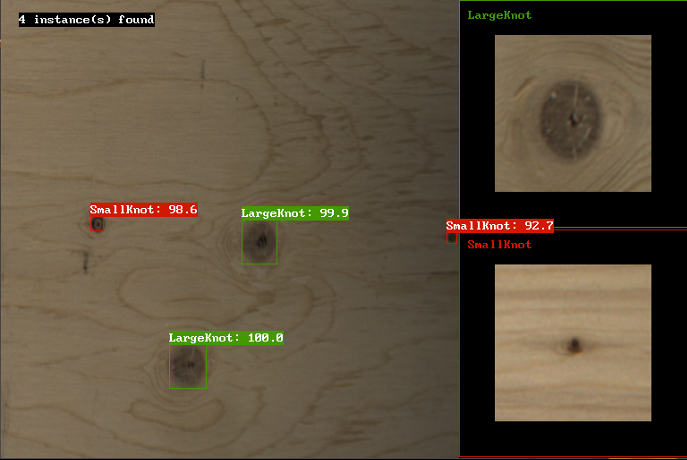

The object detection approach locates instances of pre-established classes. It is suited for inspection tasks whose goal is to find, size and count objects or features. The result for each located instance is the most likely class, the score and a bounding box including the corner coordinates, center, height and width. Image-oriented classification is particularly well-suited for inspecting images of highly textured, naturally varying, and acceptably deformed goods in complex and varying scenes.

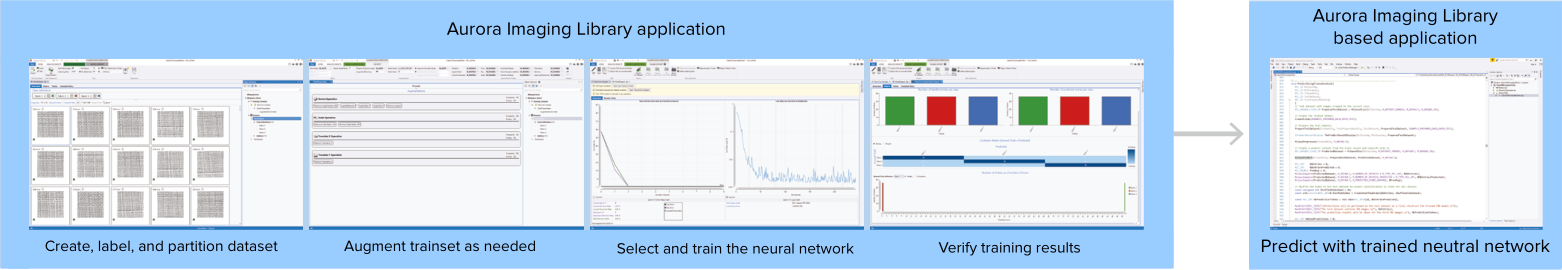

Users can opt to train a deep neural network on their own or commission Zebra to do so using previously collected images; these images must be both adequate in number and representative of the expected application conditions. Different types of training are supported, such as transfer learning and fine-tuning, all starting from one of the supplied pre-defined deep neural network architectures. Aurora Imaging Library provides the necessary infrastructure and interactive environment for building the required training dataset (including labeling images and augmenting the dataset with synthesized images) as well as monitoring and analyzing the training process. Aurora Imaging Library can hide the associated intricacies by automatically subdividing the training dataset, offering preset augmentations, and proposing a neural network model to start with. Training is accomplished using an NVIDIA GPU or x64-based CPU. Inference is performed on a CPU, Intel integrated GPU, or NVIDIA GPU, supporting different speed and budget requirements. Alternatively, users can import a trained and compatible neural network model stored in the popular ONNX format.

Feature-oriented classification uses a tree ensemble technique to categorize objects of interest from their features, expressed in numerical form, obtained from prior analysis using tools like Blob Analysis. The categorization is made by majority voting of the individual-feature decision trees. As with image-oriented classification, users can train a tree ensemble on their own using the facilities provided in Aurora Imaging Library or employ Zebra for the task.
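The majority vote used by a tree ensemble can be sketched in a few lines. Everything below is illustrative: the three single-feature "trees", their thresholds, the feature names, and the class labels are hypothetical stand-ins for trained trees, and the code does not reflect how Aurora Imaging Library trains or stores an ensemble.

```python
from collections import Counter

# Hypothetical one-feature decision trees (stumps); a trained ensemble would
# normally contain many deeper trees learned from labeled feature data.
def tree_area(f):
    return "washer" if f["area"] < 500 else "nut"

def tree_compactness(f):
    return "washer" if f["compactness"] < 1.2 else "nut"

def tree_elongation(f):
    return "washer" if f["elongation"] < 1.5 else "nut"

def majority_vote(trees, features):
    """Return (winning class, fraction of trees that voted for it)."""
    votes = Counter(tree(features) for tree in trees)
    label, count = votes.most_common(1)[0]
    return label, count / len(trees)

trees = [tree_area, tree_compactness, tree_elongation]
# Features as they might come from a prior blob measurement (made-up values).
blob_features = {"area": 420, "compactness": 1.05, "elongation": 3.0}
label, support = majority_vote(trees, blob_features)
```

Two of the three trees vote "washer" here, so the ensemble outputs that class with a support of two thirds; using an odd number of trees avoids ties in a two-class problem.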

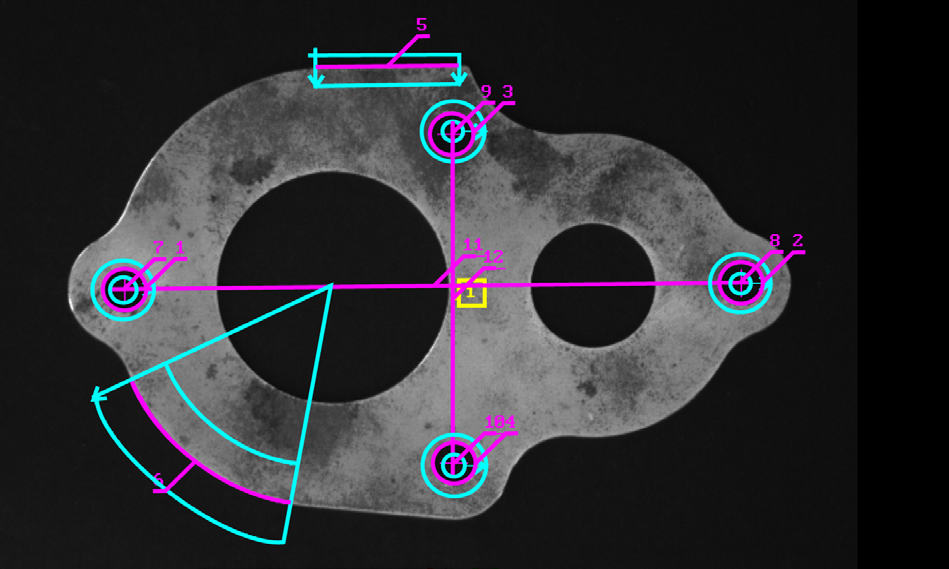

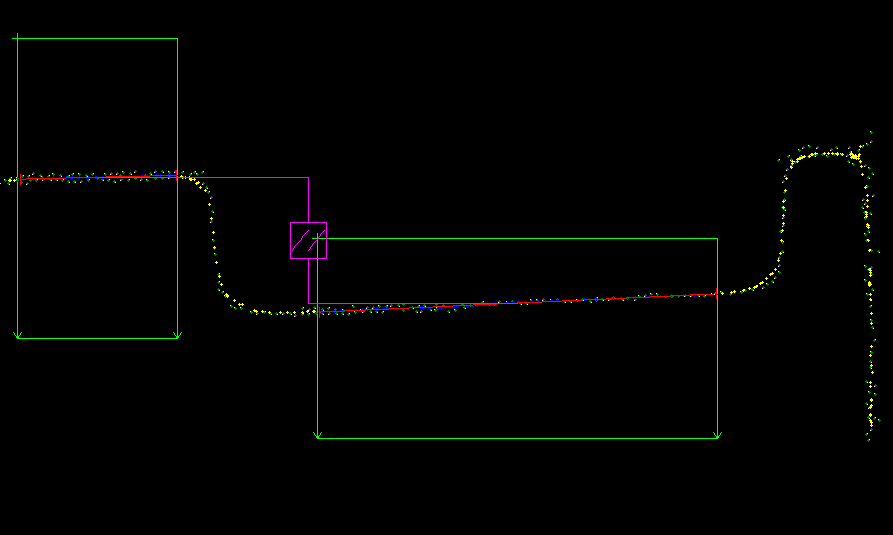

1D and 2D measurement tools

Aurora Imaging Library offers three tools for measuring: Measurement, Bead inspection, and Metrology. These tools are predominantly used to assess manufacturing quality.

The Measurement tool uses the projection of image intensity to very quickly locate and measure straight edges, stripes, or circles within a carefully defined rectangular region. The tool can make several 1D measurements on edges, stripes, and circles, as well as between edges, stripes, and circles.
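The idea of locating an edge from a projection of image intensity can be sketched as follows. This is a simplified illustration under assumed conventions (a vertical edge inside an axis-aligned region, pixel-level differences only); the names `project_columns` and `strongest_edge` are hypothetical and the Measurement tool's actual processing is not documented here.

```python
def project_columns(region):
    """Average every column of a 2D region into a 1D intensity profile."""
    rows = len(region)
    return [sum(row[c] for row in region) / rows for c in range(len(region[0]))]

def strongest_edge(profile):
    """Return (position, contrast) of the largest step between adjacent samples.

    The position is reported midway between the two samples forming the step.
    """
    diffs = [profile[i + 1] - profile[i] for i in range(len(profile) - 1)]
    i = max(range(len(diffs)), key=lambda k: abs(diffs[k]))
    return i + 0.5, diffs[i]

# A small region containing a dark-to-bright vertical edge between columns 2 and 3.
region = [[10, 10, 12, 200, 201],
          [11,  9, 10, 198, 199]]
profile = project_columns(region)             # [10.5, 9.5, 11.0, 199.0, 200.0]
position, contrast = strongest_edge(profile)  # (2.5, 188.0)
```

Collapsing the region to one dimension averages out noise along the edge and reduces the search to a 1D problem, which is why projection-based measurement is fast. A stripe width would follow as the distance between two such edges of opposite polarity.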

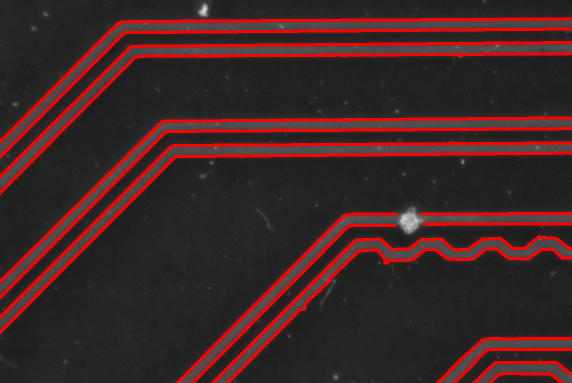

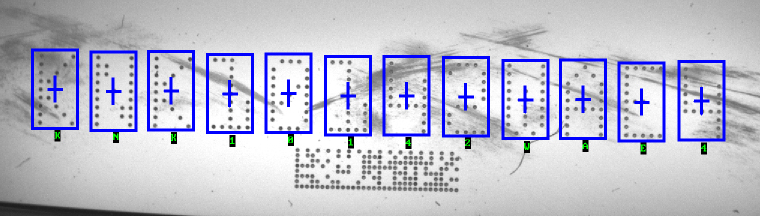

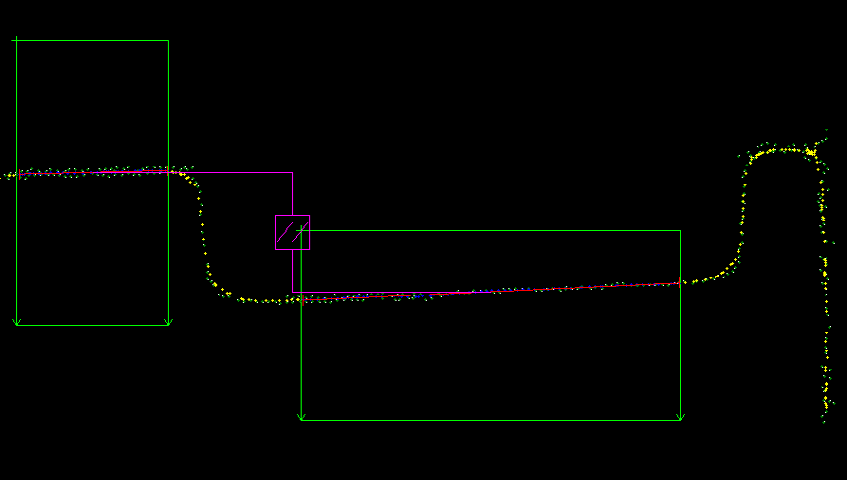

The Bead inspection tool is for inspecting material that is applied as a continuous sinuous bead, such as adhesives and sealants, or the channel where the bead will be applied. The tool identifies discrepancies in length, placement, and width, as well as discontinuities. The Bead inspection tool works by accepting a user-defined coarse path as a list of points on a reference bead and then automatically and optimally placing search boxes to form a template. The size and spacing of these search boxes can be modified to change the sampling resolution. The allowable bead width, offset, gap, and overall acceptance measure can be adjusted to meet specific inspection criteria.

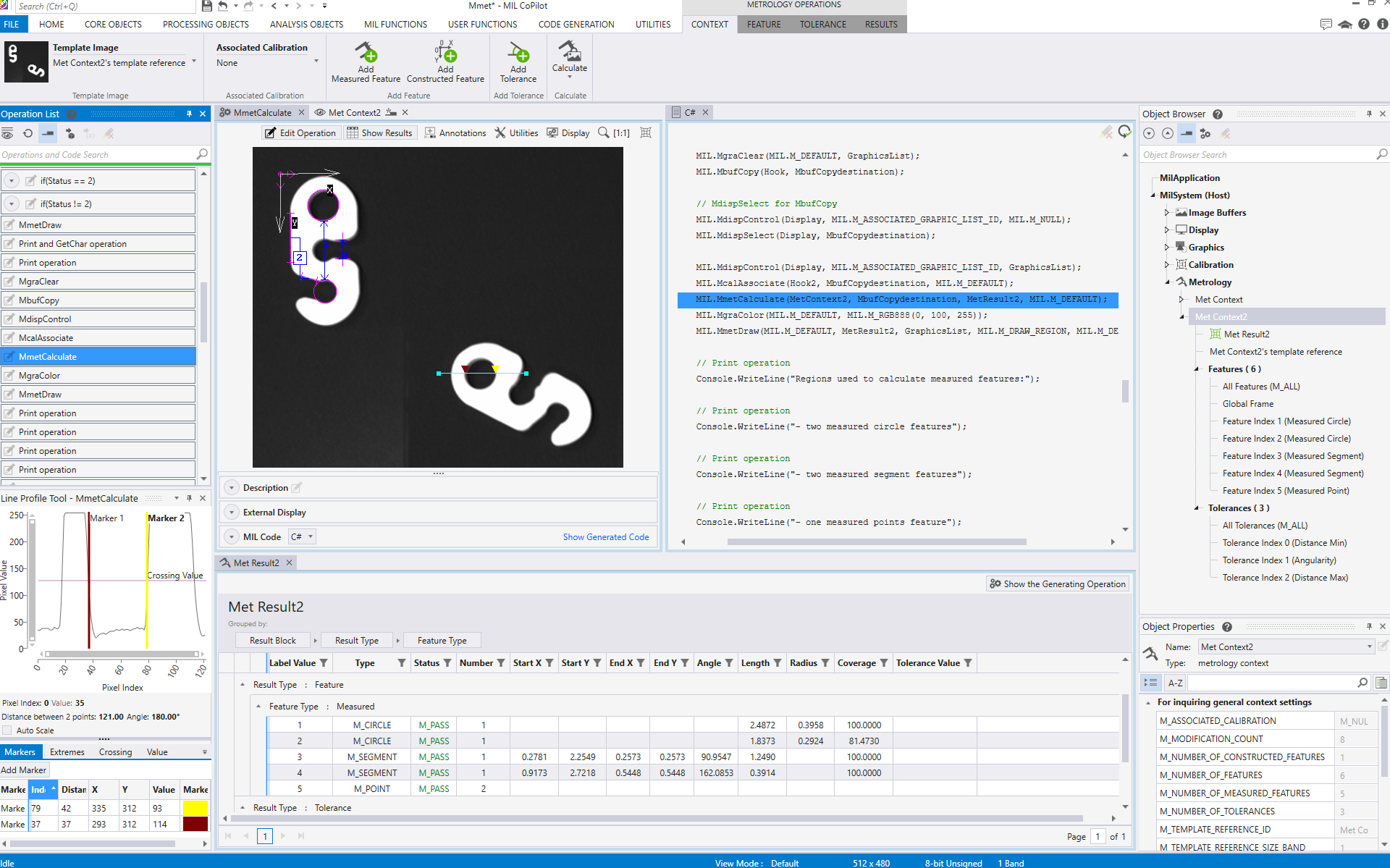

The Metrology tool is intended for 2D Geometric Dimensioning and Tolerancing (GD&T) applications. The tool quickly extracts edges within defined regions to best fit geometric features. It also supports the construction of geometric features derived from measured ones or defined mathematically. Geometric features include arcs, circles, points, and segments. The tool validates tolerances based on the dimensions, positions, and shapes of geometric features. The tool’s effectiveness is maintained when subject to uneven changes in scene illumination, which relaxes lighting requirements. The expected measured and constructed geometric features, along with the tolerances, are kept together in a template, which is easily repositioned using the results of other locating tools. Used along with the Calibration tool, the Metrology tool enables templates to be independent of camera position; it can also work on a 3D profile or cross-section image.

Color analysis tools

Aurora Imaging Library includes tools to help identify parts, products, and items using color, assess quality from color, and isolate features using color. The Color Distance tool reveals the extent of color differences within and between images. The Color Projection tool separates features from an image based on their colors and can also be used to enhance color-to-grayscale conversion for subsequent analysis using other grayscale tools. The Color Matching tool determines the best matching color from a collection of samples for each region of interest within an image.

A color sample can be specified either interactively from an image—with the ability to mask out undesired colors—or using numerical values. A color sample can be a single color or a distribution of colors (i.e., histogram). The color-matching method and the interpretation of color differences can be manually adjusted to suit application requirements. The Color Matching tool can also match each image pixel to color samples to segment the image into appropriate elements for further analysis using other tools.
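Matching each pixel to the closest color sample can be sketched as a nearest-neighbor lookup. The sketch assumes single-color samples given as RGB triplets and plain Euclidean distance in RGB; both are assumptions made for illustration, since the text states that the matching method is adjustable and does not say which metric or color space the tool uses. The names `match_color` and `segment_by_color` and the sample values are hypothetical.

```python
def match_color(pixel, samples):
    """Return the name of the sample closest to `pixel` (squared Euclidean RGB distance)."""
    def dist2(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(samples, key=lambda name: dist2(pixel, samples[name]))

def segment_by_color(image, samples):
    """Label every pixel of an RGB image (2D list of triplets) with its best sample."""
    return [[match_color(pixel, samples) for pixel in row] for row in image]

# Hypothetical single-color samples specified numerically.
samples = {"red": (200, 30, 30), "green": (30, 180, 40), "blue": (20, 40, 190)}
image = [[(190, 40, 35), (25, 170, 60)],
         [(10, 50, 200), (210, 20, 25)]]
labels = segment_by_color(image, samples)
```

The resulting label map plays the role of a segmented image: each label region could then be passed to another analysis step, as the paragraph above describes.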

Aurora Imaging Library includes color-relative calibration to correct color appearance due to differences in lighting and image sensing, thus enabling consistent performance over time and across systems. Three methods are provided: histogram-based, sample-to-sample, and global mean variance. The first method is unsupervised, only requiring that the reference and training images have similar contents. The second method is semi-supervised, requiring the correspondence between color samples on reference and training images, typically of a color chart. The third method is best suited for dealing with color drift and relies on global color distribution.

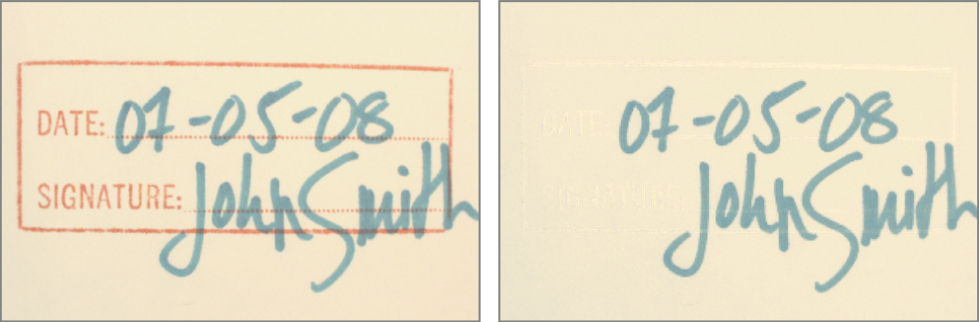

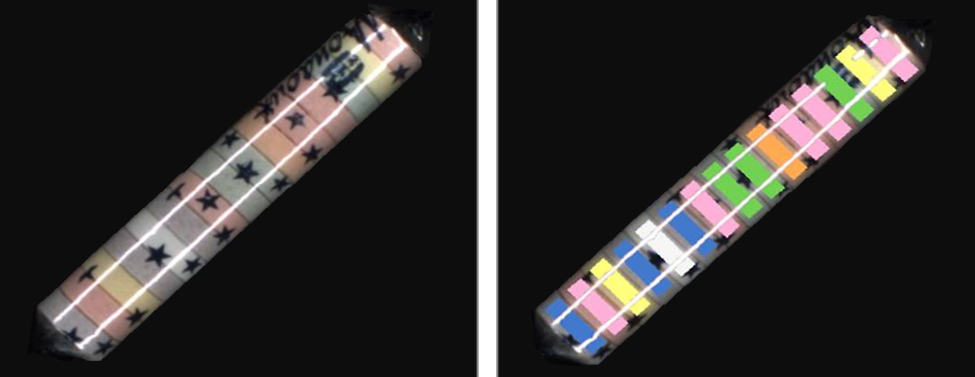

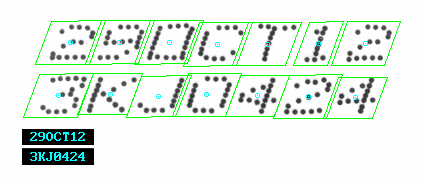

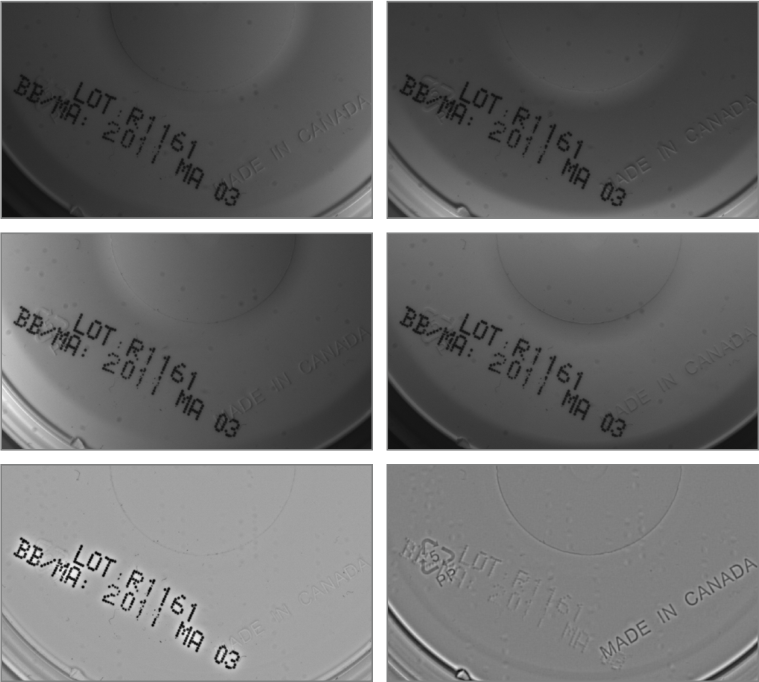

Character recognition tools

Aurora Imaging Library provides three tools for character recognition: SureDotOCR, String Reader, and OCR. These tools combine to read text that is engraved, etched, marked, printed, punched, or stamped on surfaces.

The SureDotOCR tool is uniquely designed for the specific challenge of reading dot-matrix text produced by inkjet printers and dot peen markers. Its use is straightforward: users simply need to specify the dot size and the dimension, but not the location, of the text region. The tool reads text of variable length, at any angle, with varying contrast, and/or on an uneven background. It interprets distorted and touching characters as well as characters of varying scale. It also accepts constituent dots that are of varying size and touching each other. The tool recognizes punctuation marks and blank spaces. It supports the creation and editing of character fonts while including pre-defined fonts. The tool automatically handles multiple lines of text where each line can utilize a different font. The ability to set user-defined constraints, overall and at specific character positions, further enhances recognition rates. The SureDotOCR tool provides greater robustness and flexibility than case-specific techniques that convert dot-matrix characters into solid ones for reading with traditional character recognition tools.

The String Reader tool is based on a sophisticated technique that uses geometric features to quickly locate and read text made up of solid characters in images where these characters are well separated from the background and from one another. The tool handles text strings with a known or unknown number of evenly or proportionally spaced characters. It accommodates changes in character angle with respect to the string, aspect ratio, scale, and skew, as well as contrast reversal. Strings can be located across multiple lines and at a slight angle. The tool reads from multiple pre-defined (TrueType™ and PostScript™) or user-defined Latin-based fonts. Also included are ready-made Latin-based unified contexts for automatic number plate recognition (ANPR) and machine print. In addition, strings can be subject to user-defined constraints, overall and at specific character positions, to further increase recognition rates. The tool is designed for ease of use and includes String Expert, a utility to help fine-tune settings and troubleshoot poor results.

The OCR tool utilizes a template-matching method to quickly read text with a known number of evenly spaced characters. Once calibrated, the tool reliably reads text strings with a consistent character size even if the strings themselves are at an angle. Characters can come from one of the provided OCR-A, OCR-B, MICR CMC-7, MICR E-13B, SEMI M12-92, and SEMI M13-88 fonts or a user-defined font. Strings can be subject to user-defined constraints, overall and at specific character positions, to further increase recognition rates.

1D and 2D code reading and verification tool

Aurora Imaging Library offers Code Reader, a fast and dependable tool for locating and reading 1D, 2D, and composite identification marks. The tool handles rotated, scaled, and degraded codes in tough lighting conditions. It simultaneously reads multiple 1D or DataMatrix codes as well as small codes found in complex scenes. It can automatically determine a 1D code type and the optimal settings from a training set. The tool can return the orientation, position, and size of a code. In addition to reading, the tool can also be used to verify the quality of a code based on the ANSI/AIM and ISO/IEC grading standards in conjunction with the proper hardware setup.

Registration tools

Aurora Imaging Library has a tool set for handling the registration or fusion of images for various objectives. A Stitching tool is available for transforming images taken from different vantage points into a unified scene, which would be impractical or impossible to achieve using a single camera. It can also align an image to a reference for subsequent inspection. The tool contends not only with translation, but also with perspective, including scale. Alignment to a reference image or to neighboring images is performed with sub-pixel accuracy and is robust to local changes in contrast and intensity. In addition, the tool can be used for super-resolution where a sharper image is created from a series of images taken from roughly the same vantage point, which is useful for dealing with movement such as mechanical vibration.

Separate extended Depth-of-field and Depth-from-focus tools are on hand to produce, respectively, a single all-in-focus image and an index image from a series of images of a motionless scene taken at different focus points. The index image can subsequently be used to infer depth.

A Photometric Stereo tool is offered to produce an image that emphasizes surface irregularities such as embossed or engraved features, scratches, or indentations. The image is produced from a series of images of the same scene taken with directional illumination as driven by a Quad (X2) Controller from Advanced Illumination (Ai), a Light Sequence Switch (LSS) from CCS, an LED Light Manager (LLM) from Smart Vision Lights, or a similar light controller.

An HDR imaging tool is also available to combine images of an identical scene, taken at different camera exposure levels, into a single image that contains a greater range of luminance.

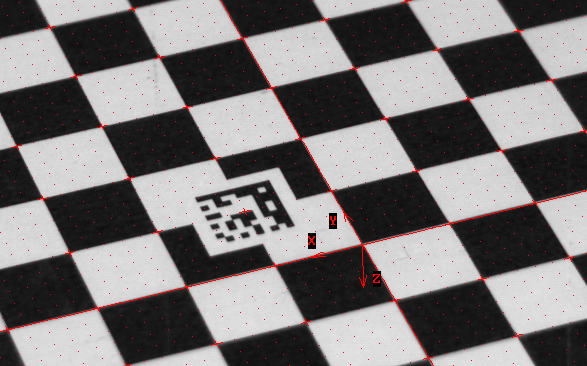

2D calibration tool

Calibration is a routine requirement for imaging. Aurora Imaging Library includes a 2D Calibration tool to convert results (i.e., positions and measurements) from pixel to real-world units and vice versa. The tool can compensate results, and even an image itself, for camera lens and perspective distortions. Calibration is achieved using an image of a grid or chessboard target, or just a list of known points. Calibration can be achieved from a partially visible target. Aurora Imaging Library also supports encoded targets that relay target characteristics, including coordinate system origin and axes, to further automate the calibration process.

Image processing primitives tools

A professional imaging toolkit must include a complete set of operators for enhancing and transforming images, and for retrieving statistics in preparation for ensuing analysis. Aurora Imaging Library includes an extensive list of Image Processing tools with fast operators for arithmetic, Bayer interpolation, color space conversion, spatial and temporal filtering, geometric transformations, histogram, logic, lookup table (LUT) mapping, morphology, orientation, projection, segmentation, statistics, thresholding, and wavelets.

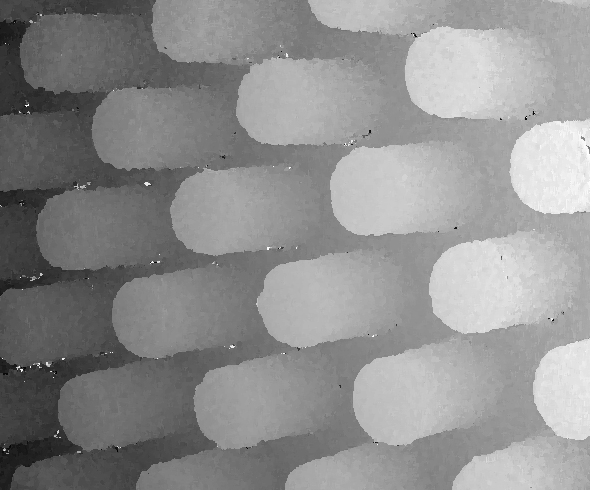

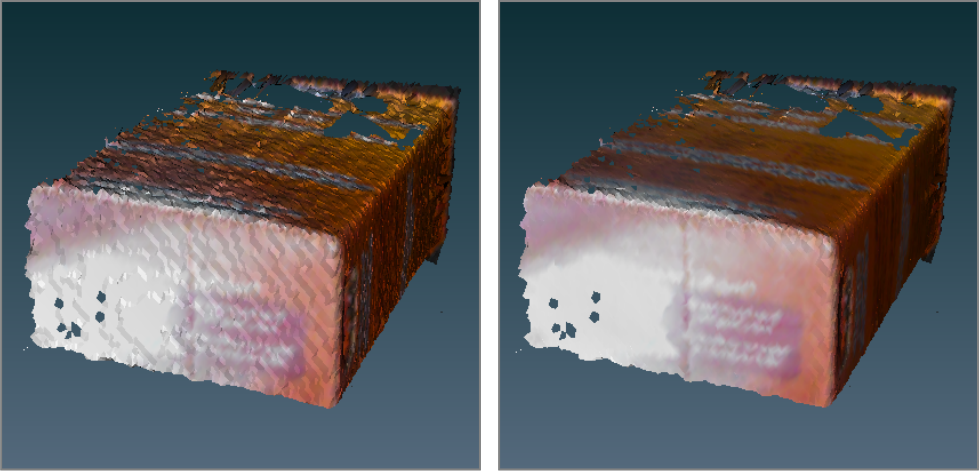

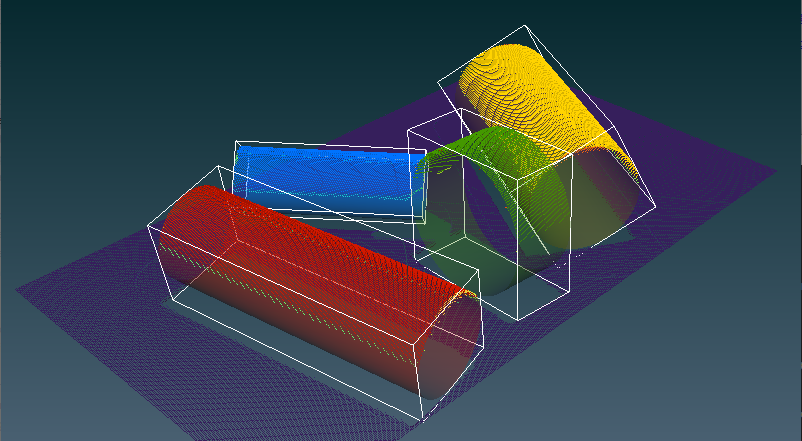

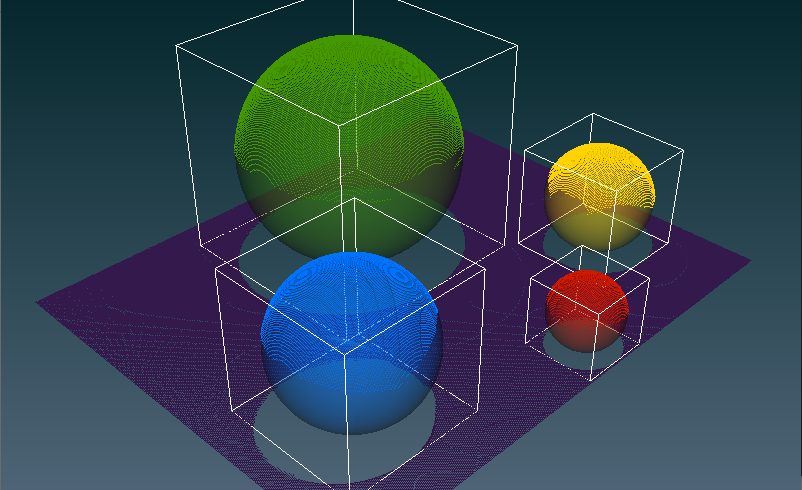

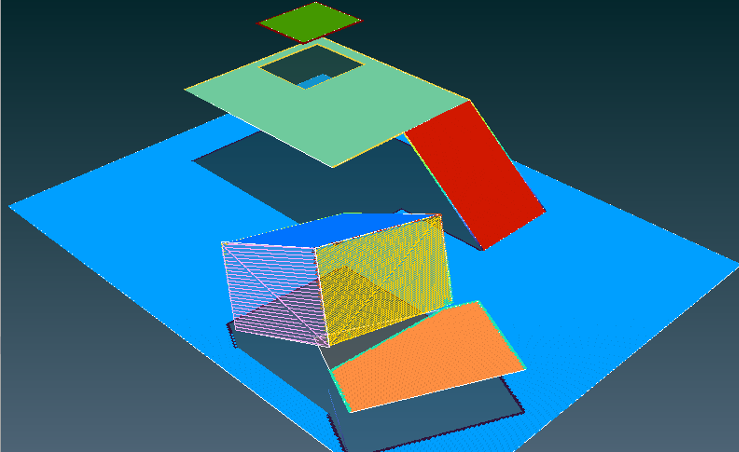

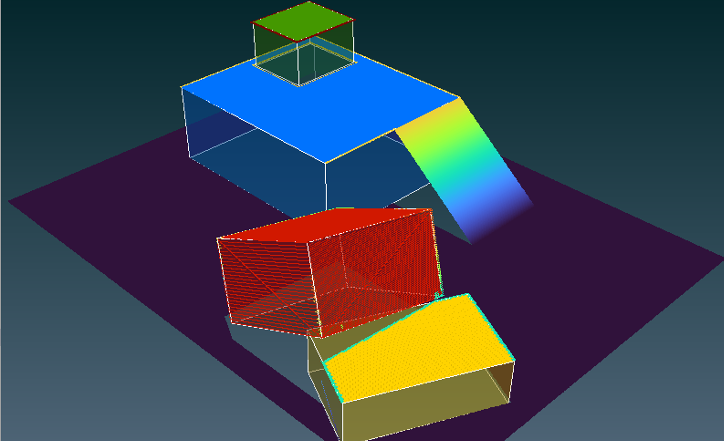

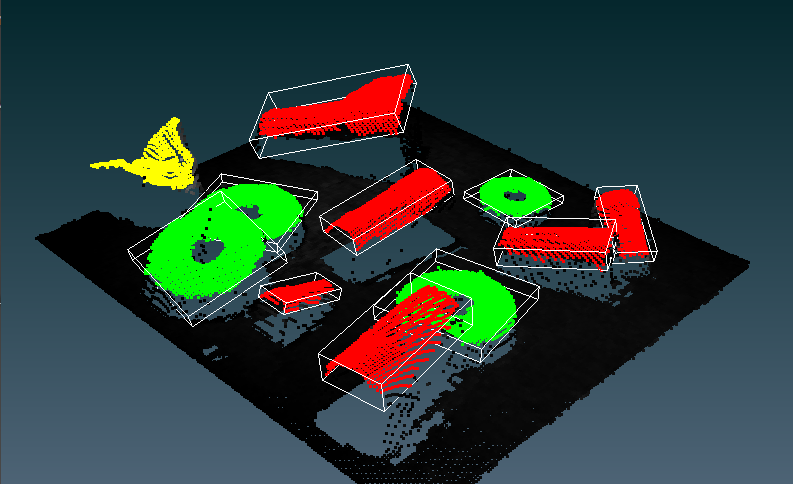

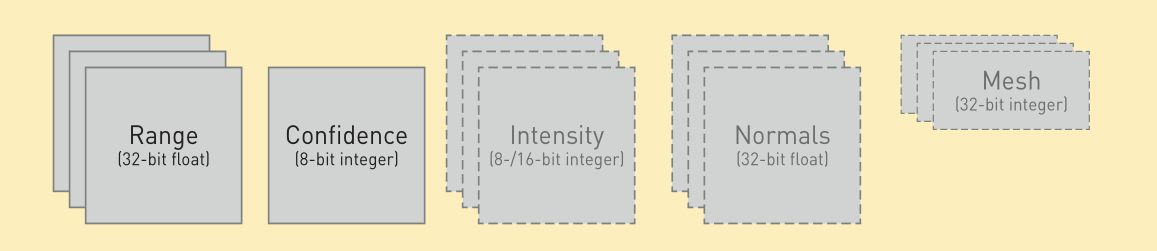

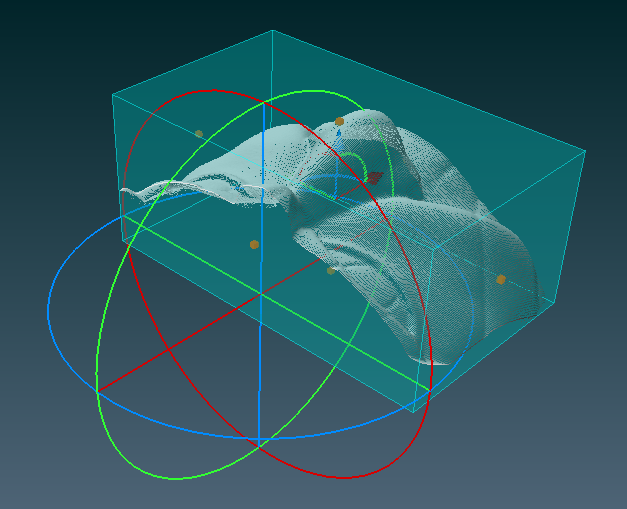

Image compression and video encoding tool

Aurora Imaging Library provides Image Compression and Video Encoding tools for optimizing storage and transmission requirements. Lossy and lossless JPEG and JPEG2000 image compression and H.264 video encoding are supported. H.264 support can leverage Intel Quick Sync Video technology for encoding multiple high-definition video streams in real time. Aurora Imaging Library saves and loads compressed images individually using the JPG and JP2 file formats or as a sequence using the AVI file format. The H.264 elementary stream can be stored in and recovered from an MP4 format file. Compression and encoding settings can be adjusted to trade size against quality.

Tools fully optimized for speed

Aurora Imaging Library image processing and analysis operations are optimized by Zebra to take full advantage of Intel SIMD instructions, including AVX2 and AVX-512, as well as multi-core CPU and multi-CPU system architectures, to perform at top speed. Aurora Imaging Library automatically dispatches operations to the type and number of processor cores needed to achieve maximum performance. Alternatively, it gives programmers control over the type and number of processor cores assigned to perform a given operation. In addition, Aurora Imaging Library is able to offload from the host CPU and even accelerate certain image processing operations when used with Zebra processing hardware with FPGA technology.

3D Vision Tools:

Situations sometimes arise where classical 2D vision techniques are unable to perform the required localization, recognition, inspection, or measurement tasks. These circumstances range from an inability to obtain the necessary consistent contrast from conventional illumination to needing the pose of an object with six degrees of freedom. This is where 3D vision tools step in, whether alone or in combination with 2D vision tools, to carry out the job.

Aurora Imaging Library has a rich set of tools for performing 3D processing and analysis. These tools work on the 3D data produced by profile and snapshot sensors as well as stereo and time-of-flight (ToF) cameras. The 3D data supported by Aurora Imaging Library can also come from a Stanford Polygon Format (PLY) or stereolithography (STL) file.

3D processing tools

The 3D processing tools in Aurora Imaging Library operate on, and in between, point clouds, depth maps, and/or elementary objects. The latter can be a box, cylinder, line, plane, or sphere. Operations on a point cloud include filtering (denoising), rotation, scaling, translation, cropping/masking, re-sampling, outlier removal, and meshing into surfaces; computing normal vectors and surface statistics; projecting to a depth map; and extracting a cross-section. Operations on a depth map include addition, subtraction/distance, and minimum/maximum; filling gaps (i.e., caused by invalid or missing data); and extracting a profile. Additional operations on both a point cloud and depth map include establishing a bounding box, computing the centroid, counting the number of points, and calculating the distances to the nearest neighboring point.
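Two of the simplest point cloud operations listed above, the axis-aligned bounding box and the centroid, can be sketched in plain Python. The function names and the tiny sample cloud are hypothetical; the sketch only illustrates the definitions and is unrelated to the library's own data structures.

```python
def bounding_box(points):
    """Axis-aligned bounding box of (x, y, z) points as (min_corner, max_corner)."""
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))

def centroid(points):
    """Mean position of all points, computed per axis."""
    n = len(points)
    return tuple(sum(axis) / n for axis in zip(*points))

# A made-up four-point cloud (units are arbitrary).
cloud = [(0, 0, 0), (2, 4, 6), (1, 2, 3), (1, -2, 3)]
box_min, box_max = bounding_box(cloud)   # (0, -2, 0) and (2, 4, 6)
center = centroid(cloud)                 # (1.0, 1.0, 3.0)
point_count = len(cloud)
```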

A depth map can subsequently be analyzed using Aurora Imaging Library’s 2D vision tools like Pattern Recognition—without being affected by illumination variations or surface texture—and Character Recognition, when the alphanumeric code to read protrudes from, but has the same color as, the background. A profile or cross section can be analyzed using Metrology.

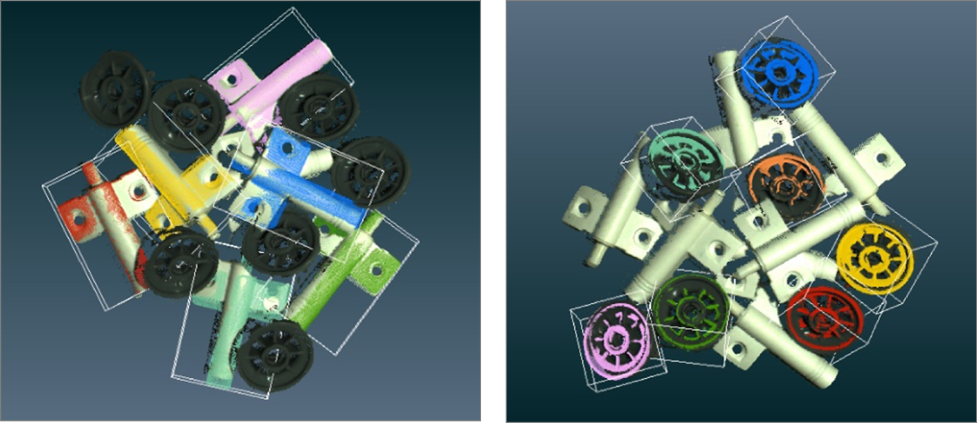

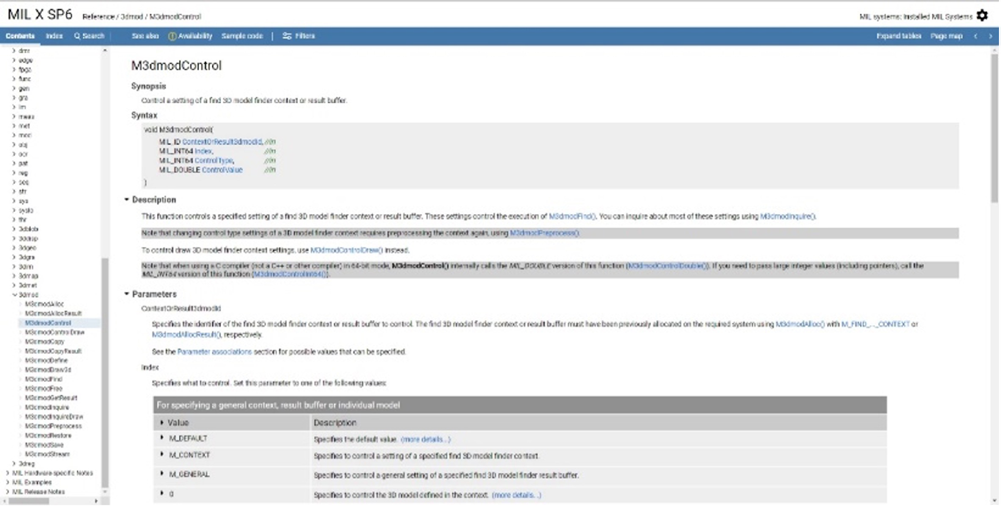

3D surface matcher

Aurora Imaging Library includes a tool for finding a surface model, including multiple occurrences, at wide-ranging orientations in a point cloud. A surface model is defined from a point cloud obtained from a 3D camera or sensor, or from a CAD (PLY or STL) file. Various controls are provided to influence the search accuracy, robustness, and speed. Search results include the number of occurrences found and, for each occurrence, the score, error, number of points, center coordinates, and estimated pose.

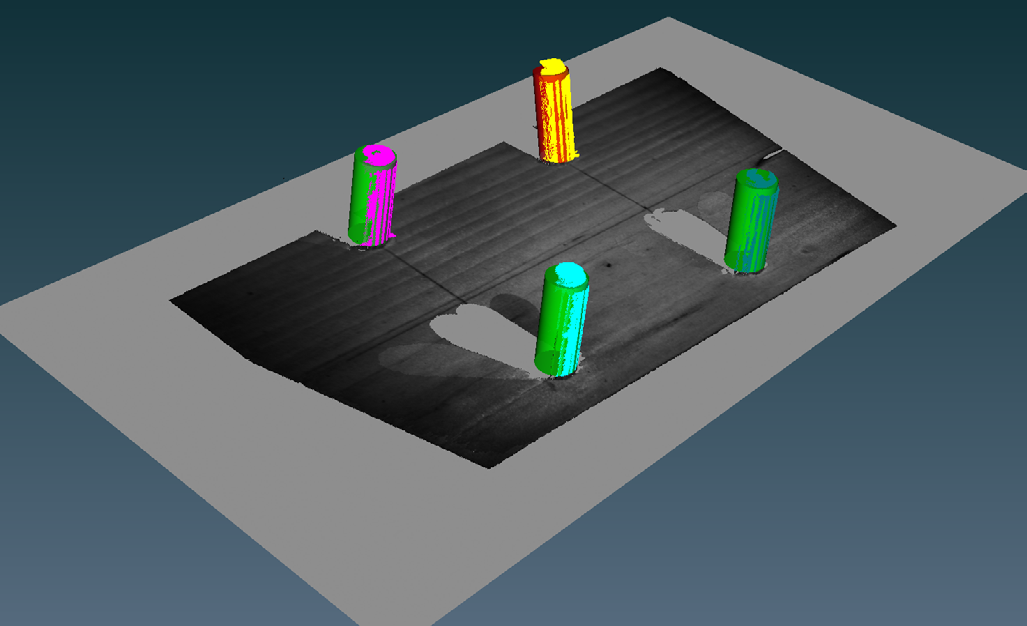

3D shape finding tool

Aurora Imaging Library provides a tool for locating specific shapes (cylinders, (hemi)spheres, rectangular planes, and boxes) in a point cloud. The shape to find is specified either numerically or from one or two previously defined elementary objects. Several settings are provided to tune the accuracy, robustness, and speed of the finding process. Results include the number of occurrences found and, for each occurrence, the score, error, number of points, and center coordinates. Additional results include the radius for spheres and cylinders; length(s) for cylinders, rectangular planes, and boxes; central axis and bases for cylinders; normal unit vector for rectangular planes; and number of visible faces for boxes.

3D blob analysis tool

The 3D Blob Analysis tool in Aurora Imaging Library is available to locate and inspect objects in a point cloud. It makes it possible to segment a point cloud into blobs, calculate numerous blob features, filter and sort blobs by features, as well as select and combine blobs. Multiple segmentation methods can be selected: point distance, color distance, or similarity of normal vectors.

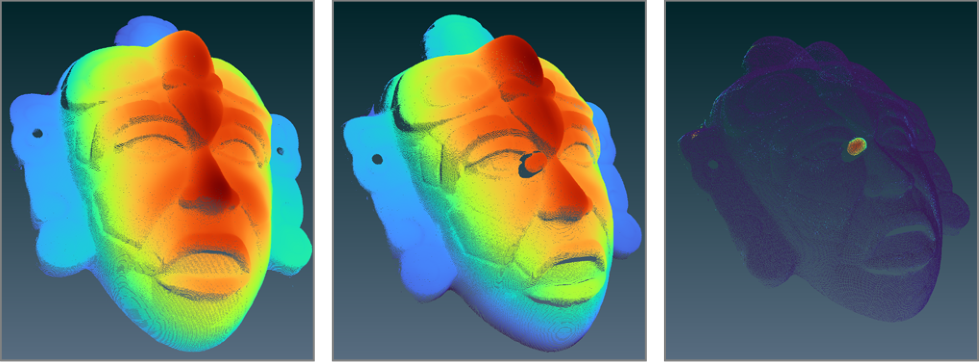

3D metrology tools

Aurora Imaging Library includes a toolset for 3D Metrology. Within this toolset, one tool fits a point cloud or depth map to a cylinder, line, plane, or sphere. Additional tools compute various distances and statistics between point clouds, depth maps, and fitted or user-defined elementary objects. Another tool is available to determine volume in a variety of ways.

3D registration tool

An additional 3D Registration tool in Aurora Imaging Library establishes the fine alignment of two or more point clouds and merges them together if required. This tool provides the means to perform high-accuracy comparative analysis between a 3D model and target, do full object reconstruction from multiple neighboring 3D scans, and align the data from multiple 3D cameras or sensors.

3D profilometry tools

Aurora Imaging Library also contains tools for 3D Profilometry using a discrete sheet-of-light source (i.e., laser) and a conventional 2D camera. A calculator is included to establish the camera, lens, and alignment needed to achieve the desired measurement resolution and range. Aurora Imaging Library provides straightforward calibration methods and associated tools to produce a point cloud or depth map. The calibration carried out in Aurora Imaging Library can combine multiple sheet-of-light sources and 2D camera pairs to work as one, thus avoiding the need for post alignment and merger. Such configurations are useful to limit occlusion, increase scan density, and image the whole volume of an object. Moreover, Aurora Imaging Library makes use of a unique derivative-based algorithm for beam extraction or peak detection, which is both more accurate and more robust than traditional ones based on the center of gravity.
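For context, the traditional center-of-gravity approach that the text compares against can be sketched as below. This is only the baseline technique: the details of the library's derivative-based algorithm are not given in the text and are not reproduced here. The function name `center_of_gravity_peak` and the `threshold` parameter are hypothetical.

```python
def center_of_gravity_peak(column, threshold=0):
    """Sub-pixel row of a laser line in one image column.

    Computes the intensity-weighted mean row over pixels brighter than
    `threshold`; returns None when no pixel exceeds the threshold.
    """
    weighted = 0.0
    total = 0.0
    for row, value in enumerate(column):
        if value > threshold:
            weighted += row * value
            total += value
    return weighted / total if total else None

# Intensities down one image column crossed by the laser line.
symmetric = center_of_gravity_peak([0, 0, 10, 30, 10, 0])    # 3.0
between = center_of_gravity_peak([0, 0, 10, 30, 30, 10])     # 3.5
# A stray reflection far from the line drags the estimate away from row 3.
biased = center_of_gravity_peak([0, 0, 10, 30, 10, 0, 0, 0, 0, 50])
```

The last call hints at why the center of gravity can be fragile: any bright pixel above the threshold, wherever it lies in the column, shifts the estimate.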

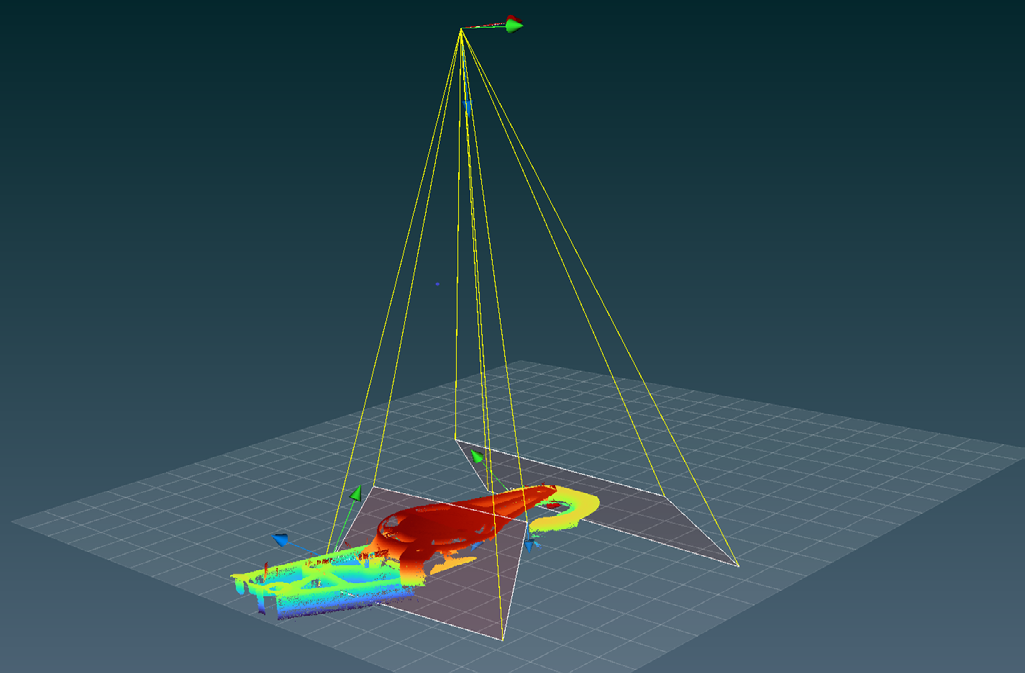

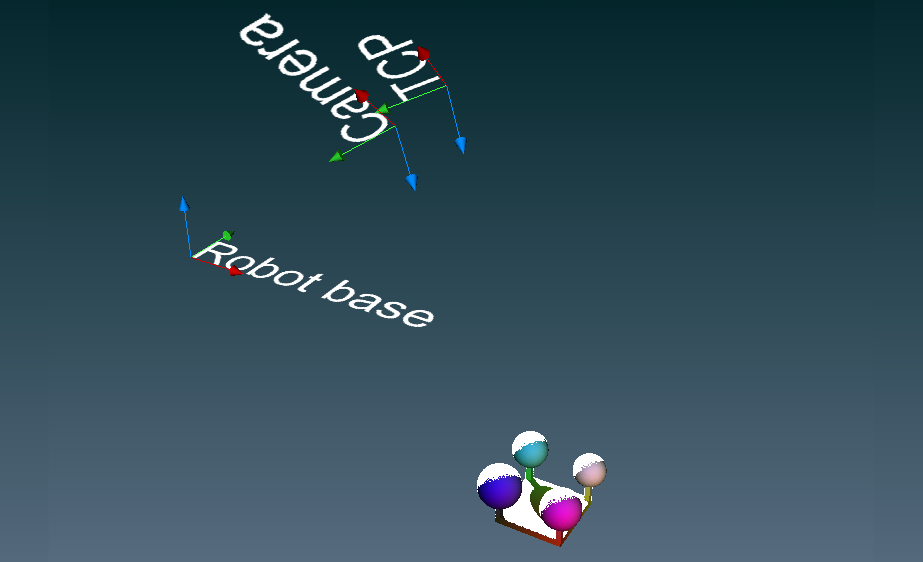

3D calibration tools

In addition, Aurora Imaging Library provides the necessary calibration services to position and orient a camera or sensor in 3D space and determine the coordinate transformation matrices between it and the rest of a robotic cell. The camera or sensor can either be fixed overhead and/or to the side of the robotic cell (i.e., eye-to-hand calibration) or mounted at the end of the robot arm (i.e., eye-in-hand calibration). The calibration makes it possible to relate what a camera or sensor sees (the object pose established using other Aurora Imaging Library analysis tools) to a robot controller for guidance.
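How a calibration result is used for guidance in the eye-to-hand case can be sketched by chaining homogeneous transformation matrices. All numbers, frame names, and helper functions below are hypothetical, and rotation is limited to the Z axis to keep the example short; the sketch shows the generic math, not a library call.

```python
import math

def pose(rz_deg, tx, ty, tz):
    """4x4 homogeneous transform: rotation about Z by rz_deg, then translation."""
    c = math.cos(math.radians(rz_deg))
    s = math.sin(math.radians(rz_deg))
    return [[c, -s, 0, tx],
            [s,  c, 0, ty],
            [0,  0, 1, tz],
            [0,  0, 0, 1]]

def matmul4(a, b):
    """Product of two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

# Assumed calibration output: camera frame expressed in the robot base frame (mm).
base_from_camera = pose(90, 500, 0, 800)
# Assumed vision result: object pose expressed in the camera frame (mm).
camera_from_object = pose(0, 100, 20, 600)

# Chaining the two gives the object pose in robot base coordinates.
base_from_object = matmul4(base_from_camera, camera_from_object)
x, y, z = (base_from_object[i][3] for i in range(3))   # about (480, 100, 1400)
```

For an eye-in-hand setup the chain would gain one more factor, the current flange pose reported by the robot, placed before the flange-to-camera transform obtained from calibration.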

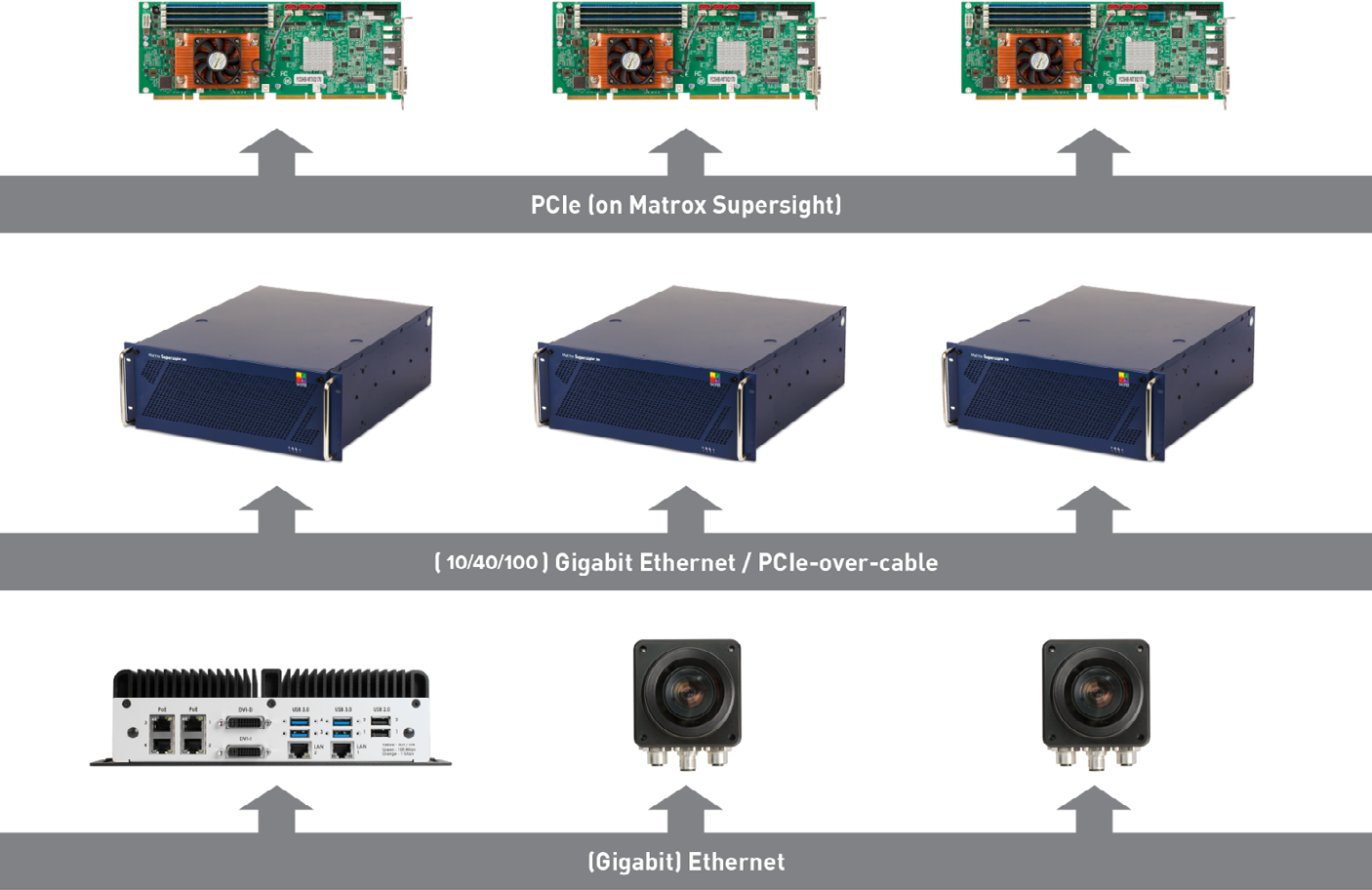

Distributed Aurora Imaging Library Interface:

Coordinate and scale performance outside the box

Aurora Imaging Library can remotely access and control image capture, processing, analysis, display, and archiving. Distributed Aurora Imaging Library functionality provides the means to scale an application beyond a single computer and make the most of modern-day HPC clusters for machine vision applications. The technology can also be used to control and monitor several PCs and smart cameras deployed on a factory floor. Distributed Aurora Imaging Library simplifies distributed application development by providing a seamless method to dispatch Aurora Imaging Library (and custom) commands; transfer data; send and receive event notifications (including errors); mirror threads; and perform function callbacks across systems. It offers low overhead and efficient bandwidth usage, even allowing agent nodes to interact with one another without involving the director node. Distributed Aurora Imaging Library also gives developers the means to implement load balancing and failure recovery. It includes a monitoring mode for supporting the connection to an already running Aurora Imaging Library application.

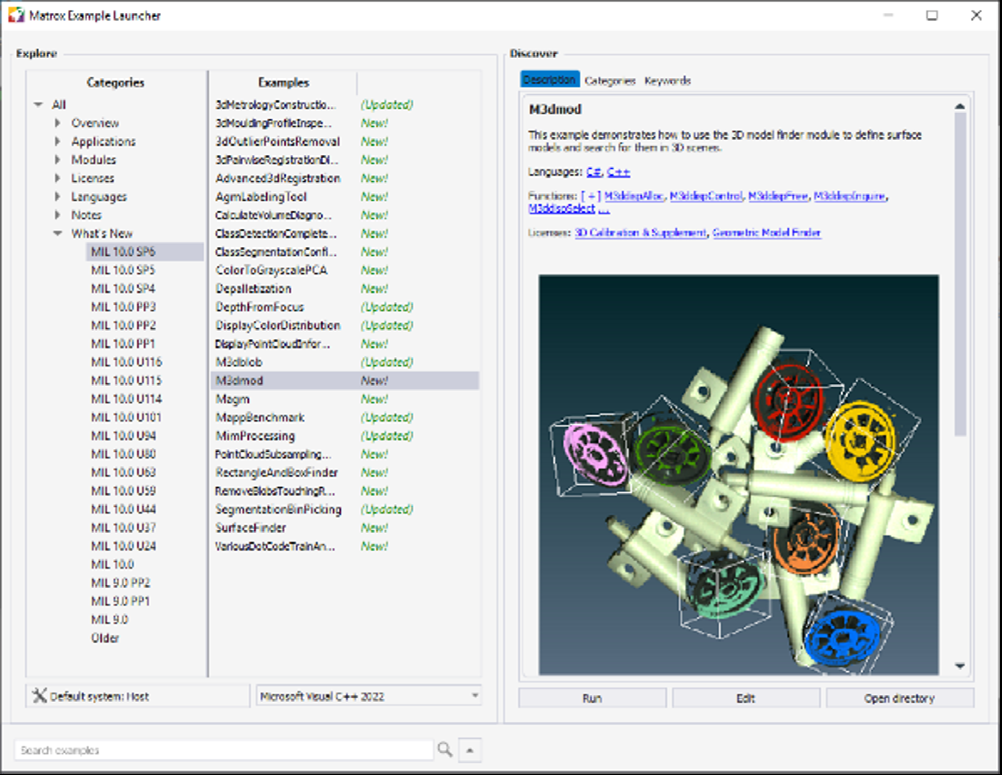

Utilities:

Aurora Imaging Library CoPilot interactive environment

Included with Aurora Imaging Library is Aurora Imaging Library CoPilot, an interactive environment to facilitate and accelerate the evaluation and prototyping of an application. This includes configuring the settings or context of Aurora Imaging Library vision tools. The same environment can also initiate, and therefore shorten, the application development process through the generation of Aurora Imaging Library program code.

Running on 64-bit Windows, Aurora Imaging Library CoPilot provides interactive access to Aurora Imaging Library processing and analysis operations via a familiar contextual ribbon menu design. It includes various utilities to study images and help determine the best analysis tools and settings for a given project. Also available are utilities to generate a custom encoded chessboard calibration target and edit images. Applied operations are recorded in an Operation List, which can be edited at any time, and can also take the form of an external script. An Object Browser keeps track of Aurora Imaging Library objects created during a session and gives convenient access to these at any moment. Non-image results are presented in tabular form and a table entry can be identified directly on the image. The annotation of results onto an image is also configurable.

Aurora Imaging Library CoPilot presents dedicated workspaces for training one of the supplied deep learning neural networks for Classification. These workspaces feature a simplified user interface that reveals only the functionality needed to accomplish the training task, such as an image label mask editor. Another specialized workspace is provided to batch-process images from an input to an output folder.

Once an operation sequence is established, it can be converted into functional program code in any language supported by Aurora Imaging Library. The program code can take the form of a command-line executable or dynamic link library (DLL); this can be packaged as a Visual Studio project, which in turn can be built without leaving Aurora Imaging Library CoPilot. All work carried out in a session is saved as a workspace for future reference and sharing with colleagues.

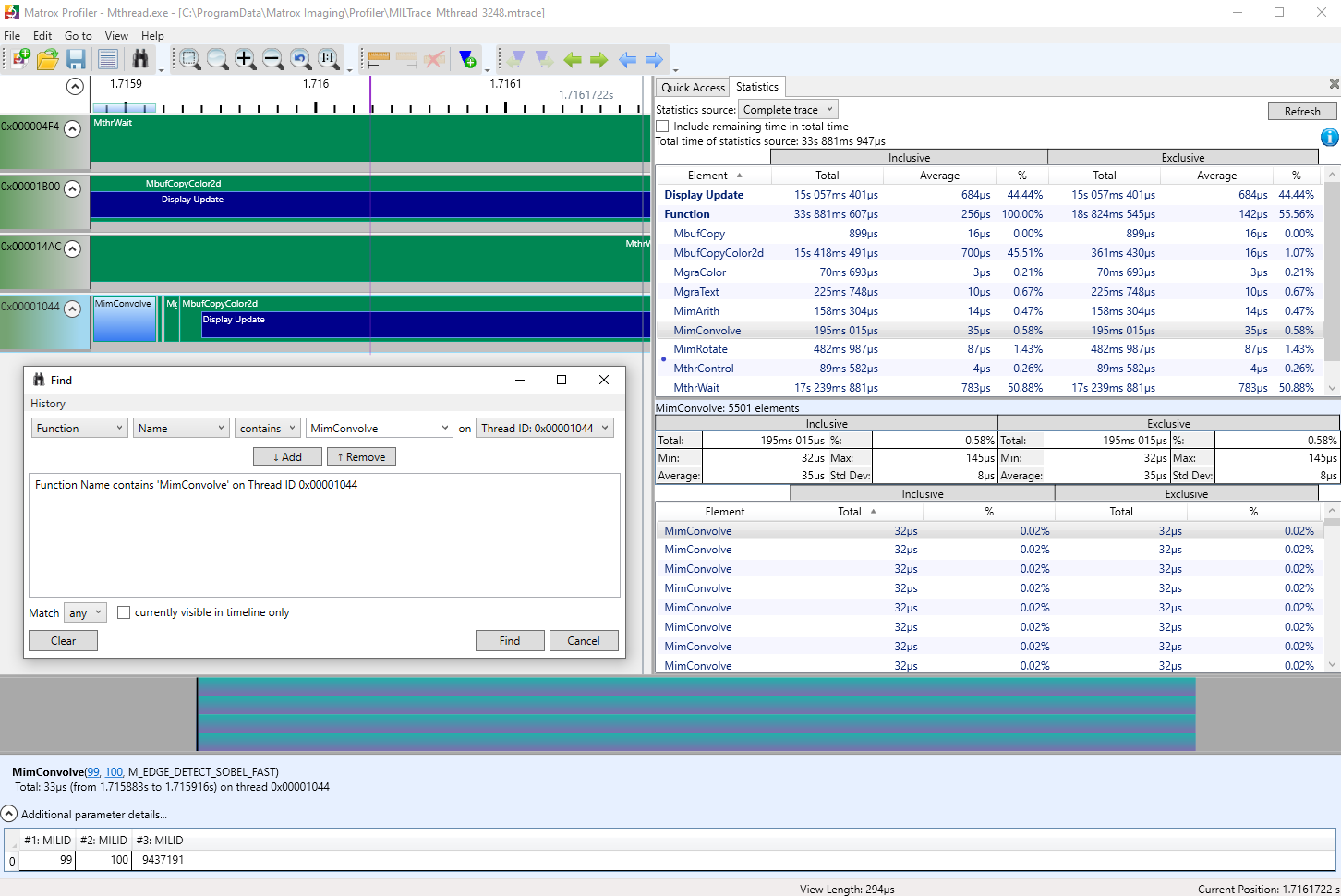

Aurora Profiler

Aurora Profiler is a Windows-based utility to post-analyze the execution of a multi-threaded application for performance bottlenecks and synchronization issues. It presents the function calls made over time per application thread on a navigable timeline. Aurora Profiler lets the user search for and select specific function calls to see their parameters and execution times. It computes statistics on execution times and presents these on a per-function basis. Aurora Profiler tracks not only Aurora Imaging Library functions but also suitably tagged user functions. Function tracing can be disabled altogether to safeguard the inner workings of a deployed application.

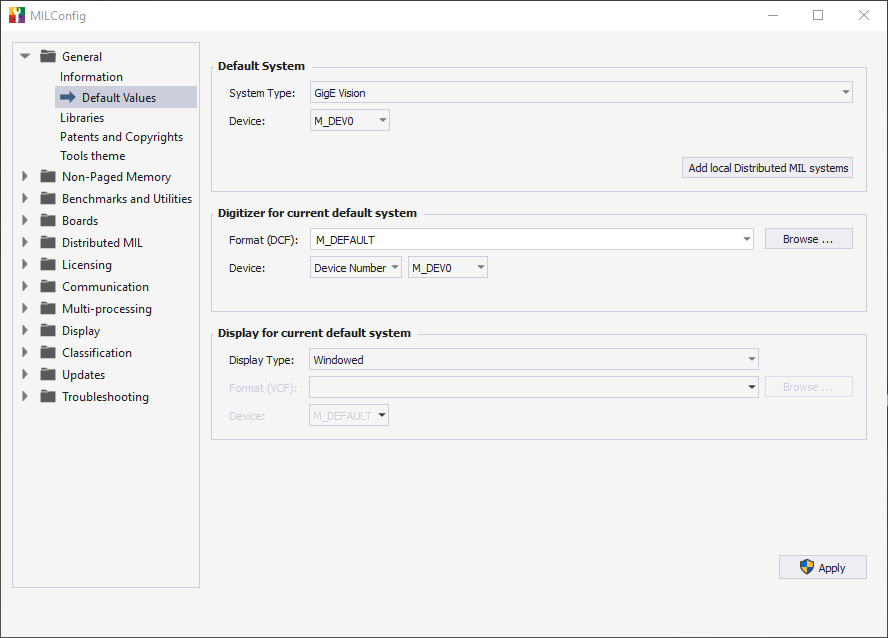

Development Features: Complete application development environment

In addition to image processing, analysis, and archiving tools, Aurora Imaging Library includes image capture, annotation, and display functions, which form a cohesive API. The API and accompanying utilities are recognized by the large installed base of users for facilitating and accelerating application development.

Portable API

The Aurora Imaging Library C/C++ API is not only intuitive and straightforward to use but it is also portable. It allows applications to be easily moved from one supported video interface or operating system to another, providing platform flexibility and protecting the original development investment.

.NET development

Included in Aurora Imaging Library is a low-overhead API layer for developing Windows applications using .NET written in Visual C# code.

JIT compilation and scripting

Aurora Imaging Library supports C# JIT compilation and CPython scripting, facilitating experimentation and prototyping. Such code can even be executed from within a compiled Aurora Imaging Library-based application, providing a simpler way to tailor an already-deployed application.

Simplified platform management

With Aurora Imaging Library, a developer does not require in-depth knowledge of the underlying platform. Aurora Imaging Library is designed to deal with the specifics of each platform and provide simplified management (e.g., hardware detection, initialization, and buffer copy). Aurora Imaging Library gives developers direct access to certain platform resources such as the physical address of a buffer. The software also includes debugging services (e.g., function parameter checking, tracing, and error reporting), as well as configuration and diagnostic tools.

Designed for multi-tasking

Aurora Imaging Library supports multi-processing and multi-tasking programming models, namely, multiple Aurora Imaging Library applications not sharing Aurora Imaging Library data or a single Aurora Imaging Library application with multiple threads sharing Aurora Imaging Library data. It provides mechanisms to access shared Aurora Imaging Library data and ensure that multiple threads using the same Aurora Imaging Library resources do not interfere with each other. Aurora Imaging Library also offers platform-independent thread management for enhancing application portability.

Buffers and containers

Aurora Imaging Library manipulates data stored in buffers, such as monochrome images arranged in 1-, 8-, 16-, and 32-bit integer formats, as well as 32-bit floating point formats. It also handles color images laid out in packed or planar RGB/YUV formats. Commands for efficiently converting between buffer types are included. Aurora Imaging Library additionally operates on containers, which combine related buffers into a cohesive whole. Containers simplify working with multi-component data such as point clouds for 3D processing and analysis as well as display.
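For readers unfamiliar with the packed and planar layouts mentioned above, the following sketch shows the difference using plain Python `bytes` objects. It does not use Aurora Imaging Library buffers or conversion commands; `packed_to_planar` and `planar_to_packed` are hypothetical helper names chosen for this illustration.

```python
def packed_to_planar(packed, channels=3):
    """Split interleaved samples (R0 G0 B0 R1 G1 B1 ...) into one plane per channel."""
    if len(packed) % channels:
        raise ValueError("buffer length must be a multiple of the channel count")
    # Every `channels`-th sample, starting at offset c, belongs to channel c.
    return [packed[c::channels] for c in range(channels)]


def planar_to_packed(planes):
    """Interleave equally sized planes back into a single packed buffer."""
    if len({len(plane) for plane in planes}) > 1:
        raise ValueError("all planes must have the same length")
    packed = bytearray()
    for samples in zip(*planes):     # one sample from each plane per pixel
        packed.extend(samples)
    return bytes(packed)


if __name__ == "__main__":
    # Two RGB pixels: (10, 20, 30) and (11, 21, 31), stored packed.
    packed = bytes([10, 20, 30, 11, 21, 31])
    red, green, blue = packed_to_planar(packed)
    print(list(red), list(green), list(blue))
    assert planar_to_packed([red, green, blue]) == packed
```

Packed layouts keep all components of a pixel adjacent, which suits display and transfer, while planar layouts keep each component contiguous, which suits per-channel processing.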

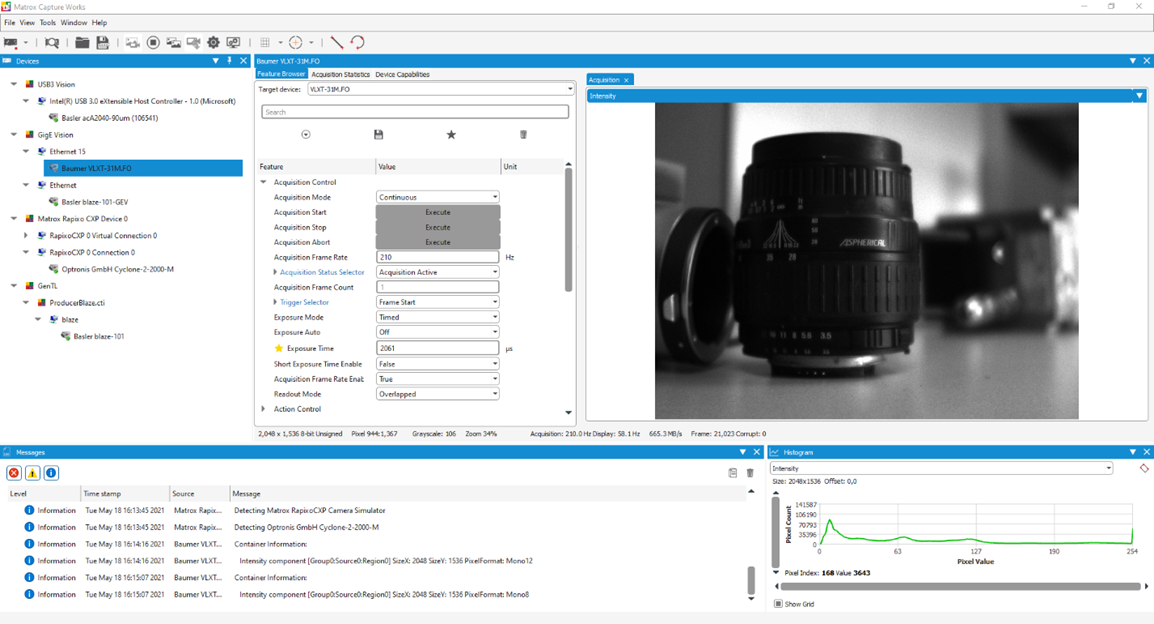

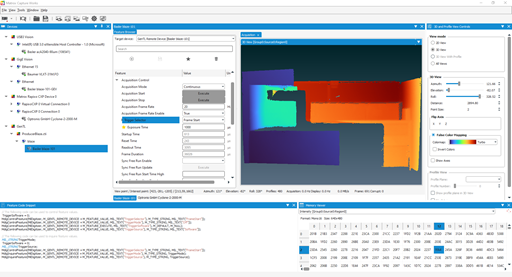

Saving and loading data

Aurora Imaging Library supports the saving and loading of individual images, image sequences, and data containers to and from disk. Supported file formats are AVI (Audio Video Interleave), BMP (bitmap), JPG (JPEG), JP2 (JPEG2000), MP4 (MPEG-4 Part 14), PLY, MIM (native), PNG, STL, and TIF (TIFF), as well as a raw format.

Industrial and robot communication

Aurora Imaging Library lets applications interact directly with automation controllers using the CC-Link IE Field Basic, EtherNet/IP™, Modbus®, and PROFINET® (with appropriate Zebra I/O card, smart camera, or vision controller) industrial communication protocols. It also supports native communication with robot controllers from ABB, DENSO, EPSON, FANUC, KUKA, and Stäubli.

WebSocket access

Aurora Imaging Library allows an application to publish Aurora Imaging Library object data for access from a browser or another standalone application using the HTML5 WebSocket communication protocol. It uses a client-server architecture where the server is the Aurora Imaging Library-based application and the client is a JavaScript program running in a browser or a standalone application. The functionality can be used locally on the same device running the Aurora Imaging Library-based application or remotely on another device that does not have Aurora Imaging Library installed on it. The API extension supports client-side programming in JavaScript or C/C++. The Aurora Imaging Library objects supported are buffers and displays. The functionality serves to view and interact with an Aurora Imaging Library display (i.e., pan, scroll, zoom, etc.).

Flexible and dependable data capture

There are many ways for an imaging system to capture data: analog, Camera Link, CoaXPress, DisplayPort, GenTL, GigE Vision, HDMI, SDI, and USB3 Vision. Aurora Imaging Library supports all these interfaces either directly or through Zebra or third-party hardware. Aurora Imaging Library works with images captured from virtually any type of color or monochrome source including standard, high-resolution, high-rate, frame-on-demand cameras, line scanners, 3D sensors, slow scan, and custom-designed devices.

For greater determinism and the fastest response, Aurora Imaging Library provides multi-buffered data capture control performed in the operating system’s kernel mode. Data capture is secured for frame rates measured in the thousands per second even when the host CPU is heavily loaded with tasks such as HMI management, networking, and archiving to disk. The multi-buffered mechanism supports callback functions for simultaneous capture and processing even when the processing time occasionally exceeds the capture time.

Aurora Capture Works

Aurora Imaging Library includes Aurora Capture Works, a utility for verifying the connection to one or more GenICam™-based cameras or 3D sensors and testing acquisition from these. Aurora Capture Works can obtain CoaXPress, GenTL, GigE Vision, and USB3 Vision device information; collect and present acquisition statistics; and provide access to acquisition properties. The built-in Feature Browser allows the user to configure and control devices with ease. Device settings can be saved for future reuse. Captured data from multiple devices can be displayed efficiently in 2D and 3D where applicable, with the option to view histograms, 3D and profile data, real-time pixel profiles, memory values, Aurora Imaging Library code snippets, and much more. Aurora Capture Works can also be used to apply firmware updates to devices provided these follow the GenICam FWUpdate standard. Another utility, Aurora Intellicam, is provided to set up and test acquisition from cameras with analog, Camera Link, and other digital interfaces.
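The multi-buffered capture with callbacks described in this section can be illustrated with a small producer/consumer simulation. It is a sketch of the general pattern only and assumes nothing about the Aurora Imaging Library grab functions: `run_capture` and `on_frame` are hypothetical names, the "frames" are 4-byte counters rather than images, and the simulated grabber simply waits when every buffer is busy, which real capture hardware cannot do.

```python
import queue
import threading


def run_capture(frame_count, buffer_count, on_frame):
    """Simulate a grab loop filling a pool of buffers while a callback processes them."""
    free = queue.Queue()      # buffers ready to be filled
    filled = queue.Queue()    # buffers holding a captured frame
    for _ in range(buffer_count):
        free.put(bytearray(4))            # tiny stand-in for an image buffer

    def grab():
        for n in range(frame_count):
            buf = free.get()              # waits only if every buffer is in use
            buf[:] = n.to_bytes(4, "little")   # "capture" frame n into the buffer
            filled.put(buf)
        filled.put(None)                  # sentinel: no more frames

    producer = threading.Thread(target=grab)
    producer.start()
    while True:
        buf = filled.get()
        if buf is None:
            break
        on_frame(buf)                     # processing overlaps with the next grabs
        free.put(buf)                     # hand the buffer back to the pool
    producer.join()


if __name__ == "__main__":
    seen = []
    run_capture(10, 3, lambda buf: seen.append(int.from_bytes(buf, "little")))
    print(seen)
```

With several buffers in the pool, a callback that occasionally runs long only delays the return of one buffer; capture continues into the others, which is why the pool size is chosen to cover the worst expected processing delay.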

Simplified 2D image display

Aurora Imaging Library provides transparent 2D image display management with automatic tracking and updating of image display windows at live video rates. Aurora Imaging Library also allows for live image display in a user-specified window. Display of multiple video streams using multiple independent windows or a single mosaic window is also supported. Moreover, Aurora Imaging Library provides non-destructive graphics overlay, suppression of tearing artifacts, and filling the display area at live video rates. All these features are performed with little or no host CPU intervention when using appropriate graphics hardware. Aurora Imaging Library also supports multi-screen display configurations that are in an extended desktop mode (i.e., desktop across multiple monitors), exclusive mode (i.e., monitor not showing desktop but dedicated to Aurora Imaging Library display), or a combination.

Graphics, regions, and fixtures

Aurora Imaging Library provides a feature-rich graphics facility to annotate images and define regions of operation. This capability is used by the Aurora Imaging Library analysis tools to draw settings and results onto an image. It is also available to the programmer for creating application-specific image annotations. The graphics facility supports different shapes (dot, line, polyline, polygon, arc, and rectangle) and text with selectable font. It takes image calibration into account, specifically the unit, reference coordinate system, and applicable transformations. The graphics scale smoothly when zooming to sub-pixel.

An interactive mode lets developers easily offer user editing of graphics, including the ability to add, move, resize, and rotate graphic elements. Moreover, the application can hook to interactivity-related events to automatically initiate underlying actions. The graphics facility can further be used to define regions to guide or confine subsequent Aurora Imaging Library analysis operations. Regions can also be repositioned automatically by tying their reference coordinate system to the positional results of an Aurora Imaging Library analysis operation.

Native 3D display

Aurora Imaging Library can natively display point clouds. A 3D display can be panned, tilted, and zoomed in all directions. Visual guides appear to help orient the view. In addition to showing individual points, a 3D display can be supplemented with geometric shapes (i.e., box, cylinder, line, plane, point, sphere, text, coordinate system axes, grid (on reference plane), and orientation box). Aurora Imaging Library allows control over appearance (solid or wireframe), opacity, color (including point cloud colorization using jet or turbo lookup tables), visibility, and thickness. The facility is used by the various 3D processing and analysis tools to display results. A box shape can be user-editable, useful for interactively specifying a region to view and subsequently work with.

Application deployment

Aurora Imaging Library offers a flexible licensing model for application deployment. Only the components required to run the application need to be licensed. License fulfillment is achieved using a pre-programmed dongle or an activation code tied to Zebra hardware (i.e., smart camera, vision controller, I/O card, frame grabber, or dongle). Some components are pre-licensed with certain Zebra hardware; please consult the individual Zebra hardware datasheets for details. The use of Distributed Aurora Imaging Library within the same physical system does not require an additional specific license. The installation of Aurora Imaging Library for redistribution can even be hidden from the end user.

Documentation, IDE integration, and examples

Aurora Imaging Library’s online help provides developers with comprehensive and easy-to-find documentation, and can even be tailored to match the environment in use. The online help can be called up from within Visual Studio to provide contextual information on the Aurora Imaging Library API. Also supported is Visual Studio’s intelligent code-completion facility, giving a programmer on-the-spot access to relevant aspects of the Aurora Imaging Library API. An extensive set of categorized and searchable example programs allows developers to quickly get up to speed with Aurora Imaging Library, with more available on GitHub® repositories.

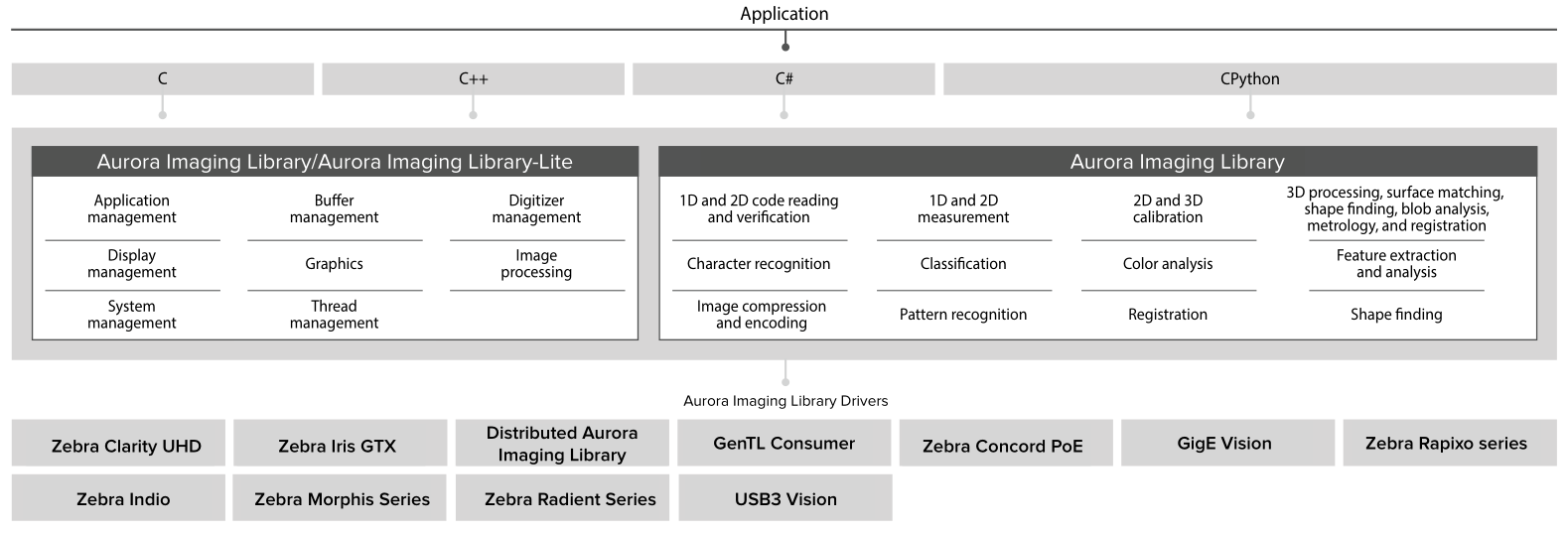

Aurora Imaging Library-Lite

Aurora Imaging Library-Lite is a subset of Aurora Imaging Library, featuring programming functions for performing image capture, annotation, display, and archiving. It also includes fast operators for arithmetic, Bayer interpolation, color space conversion, temporal filtering, basic geometric transformations, histogram, logic, LUT mapping, and thresholding. Aurora Imaging Library-Lite is licensed for both application development and deployment with Aurora Imaging hardware and is available as a free download. Aurora Imaging Library-Lite users also get unfettered access to applicable Vision Academy training content and software updates.

Software architecture

Aurora Imaging Library provides a comprehensive set of application programming interfaces, imaging tools, and hardware support.

Supported Environments For Windows:

For Linux:

Aurora Imaging Library for Arm

The majority of the processing, analysis, annotation, display, and archiving functionality in Aurora Imaging Library is also available to run on Arm Cortex®-A family processors, specifically those employing the Armv8-A 64-bit architecture. The processing and analysis functions are optimized for speed using the Neon™ SIMD architecture extension. Aurora Imaging Library for Arm is supported on appropriate 64-bit Linux distributions, like the one from Ubuntu. Image capture can be accomplished using the GenTL, GigE Vision, or Video4Linux2 interfaces. Aurora Imaging Library for Arm is available to select users as a separate package upon qualification. For more information, contact Zebra sales.

Training and Support: Vision Academy

Vision Academy provides all the expertise of live classroom training, with the convenience of on-demand instructional videos outlining how to get the most out of Aurora Imaging Library vision software. It is available to customers with valid Aurora Imaging Library maintenance subscriptions, as well as Aurora Imaging Library-Lite users and those evaluating the software. Users can seek out training on specific topics of interest, where and when needed. Vision Academy aims to help users increase productivity, reduce development costs, and bring applications to market sooner. For more information, contact Vision Academy.

Professional Services

Professional Services delivers deep technical assistance and customized training to help customers develop their particular applications. These professional services comprise personalized training; assessing application or project feasibility (e.g., illumination, image acquisition, and vision algorithms); demo and prototype applications and projects; troubleshooting, including remote debugging; and video/camera interfacing. Backed by the Vision Squad, a team of high-level vision professionals, Professional Services offers more in-depth support, recommending best methods with the aim of helping customers save valuable development time and deploy solutions more quickly. For more information on pricing and scheduling, contact Zebra sales.

Aurora Imaging Library maintenance program

Aurora Imaging Library users have access to a Maintenance Program, renewable on a yearly basis. This maintenance program entitles registered users to free software updates and entry-level technical support from Zebra, as well as access to Vision Academy. For more information, please refer to the Software Maintenance Programs.

Ordering Information: Aurora Imaging Library Development Toolkits

Aurora Imaging Library Maintenance Program

Vision Academy Online Training

Vision Academy On-Premises Training

Software license keys

For more information, contact CRI Jolanta by phone at +48 32 775 0371, by email at info@crijolanta.com.pl, or by contact form.
